Modern Salesforce integrations must support far more than simple data exchange. They are expected to power real-time customer experiences, coordinate actions across multiple systems, and operate reliably at enterprise scale—all while meeting strict security and compliance requirements. Choosing the right integration approach is therefore a critical architectural decision, not an implementation detail. Consider a common enterprise scenario. A customer completes a purchase in a mobile app, triggering a real-time eligibility check for a personalized offer. At the same time, transaction data must be recorded in an ERP system, customer attributes updated in Salesforce, and analytics streamed to a data lake—without introducing latency, data duplication, or compliance risk. Scenarios like this are now typical in modern Salesforce environments, where Salesforce rarely operates in isolation and must integrate seamlessly with a broader ecosystem of applications and data platforms. This guide exists to help architects and developers design those integrations with clarity and confidence. Rather than focusing on point-to-point implementations, it presents a set of proven integration patterns that address recurring enterprise scenarios—such as process orchestration, data synchronization, and real-time data access. Each pattern emphasizes architectural intent, trade-offs, and execution models, enabling teams to make informed design decisions that scale and endure. Within this document, you’ll find:

- Integration patterns that represent common enterprise “archetypes” across process, data, and virtual access scenarios

- A pattern selection framework to help identify the right approach based on integration intent and execution timing

- Practical guidance on scalability, resiliency, governance, and security considerations

- Best practices drawn from real-world Salesforce and Data 360 implementations

The goal of this document is to provide a shared architectural language for integration, helping teams design solutions that balance performance, flexibility, and trust while aligning with enterprise data and governance strategies.

This document is for designers and architects who need to integrate data from other applications in their enterprise with Salesforce Data 360 (formerly Data Cloud). This content is a distillation of many successful implementations by Salesforce architects and partners. To become familiar with integration capabilities and options available for large-scale adoption of Data 360, read Pattern Summary and Pattern Selection Guide sections below . Architects and developers should consider these pattern details and best practices during the design and implementation phase of data interaction projects into Data 360. If implemented properly, these patterns enable you to get to production as fast as possible and have the most stable, scalable, and maintenance-free set of applications possible. Salesforce’s own consulting architects use these patterns as reference points during architectural reviews and actively maintain and improve them. As with all patterns, this content covers most integration scenarios, but not all. While Salesforce allows for user interface (UI) integration,-mashups, for example, such integration is outside the scope of this document.

Each integration pattern follows a consistent structure. This provides consistency in the information provided in each pattern and also makes it easier to compare patterns.

- Name: The pattern identifier that also indicates the type of integration contained in the pattern.

- Context: The overall integration scenario that the pattern addresses. Context provides information about what users are trying to accomplish and how the application behaves to support the requirements.

- Problem: Expressed as a question, this is the scenario that the pattern is designed to solve. Read this section to understand if the pattern is appropriate for your integration scenario.

- Forces: The constraints and circumstances that make the stated scenario difficult to solve.

- Solution: The recommended way to solve the integration scenario.

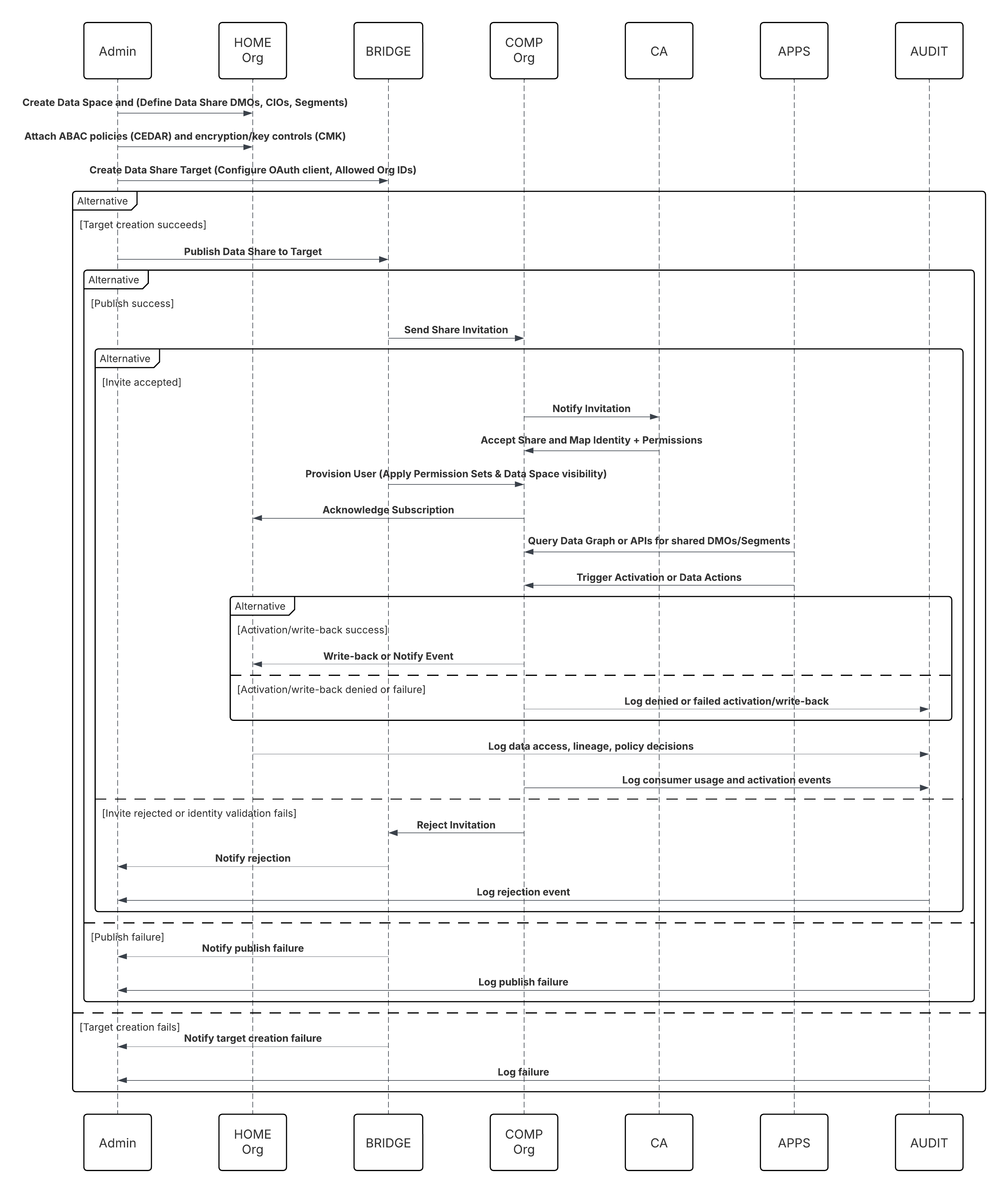

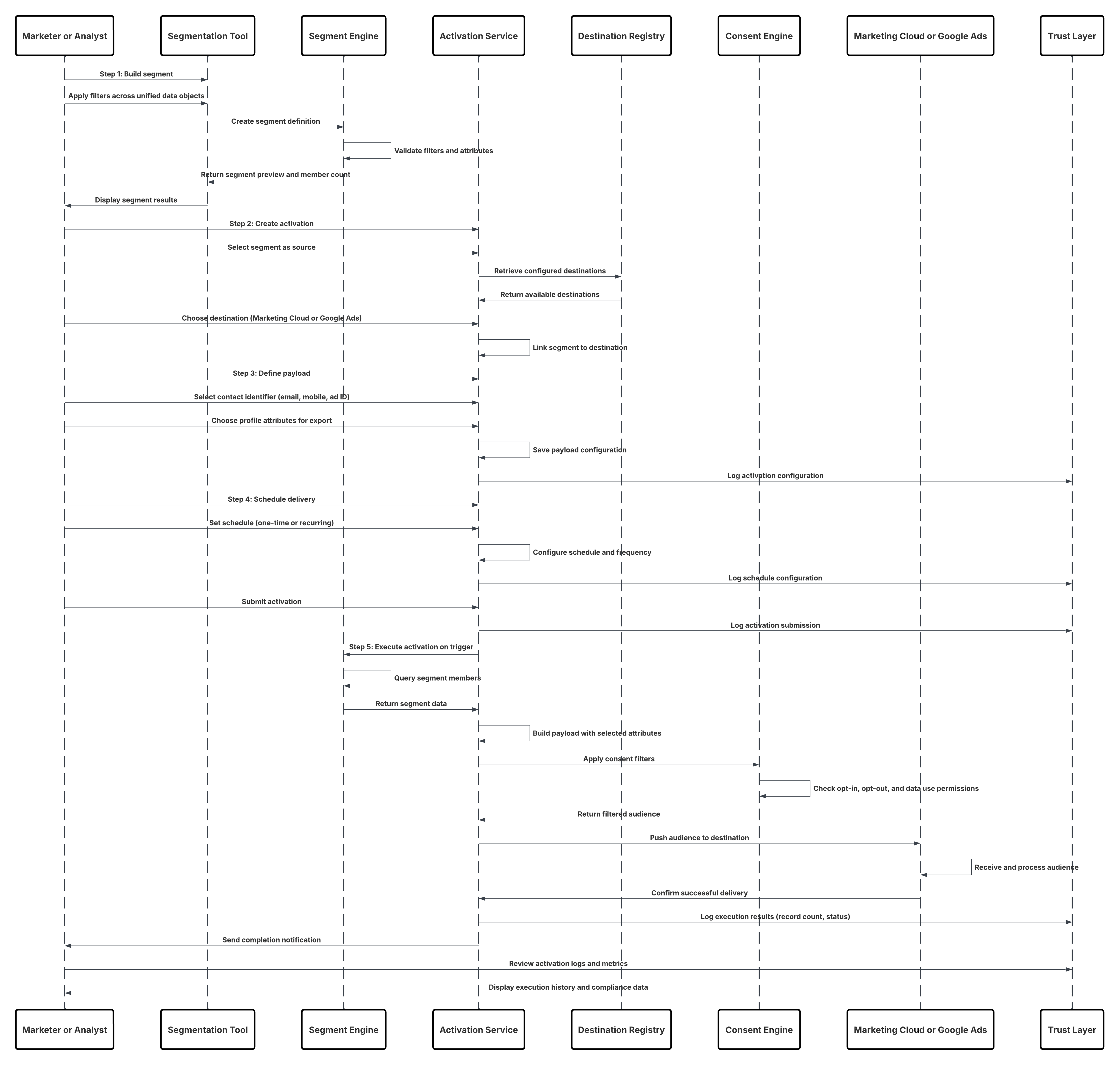

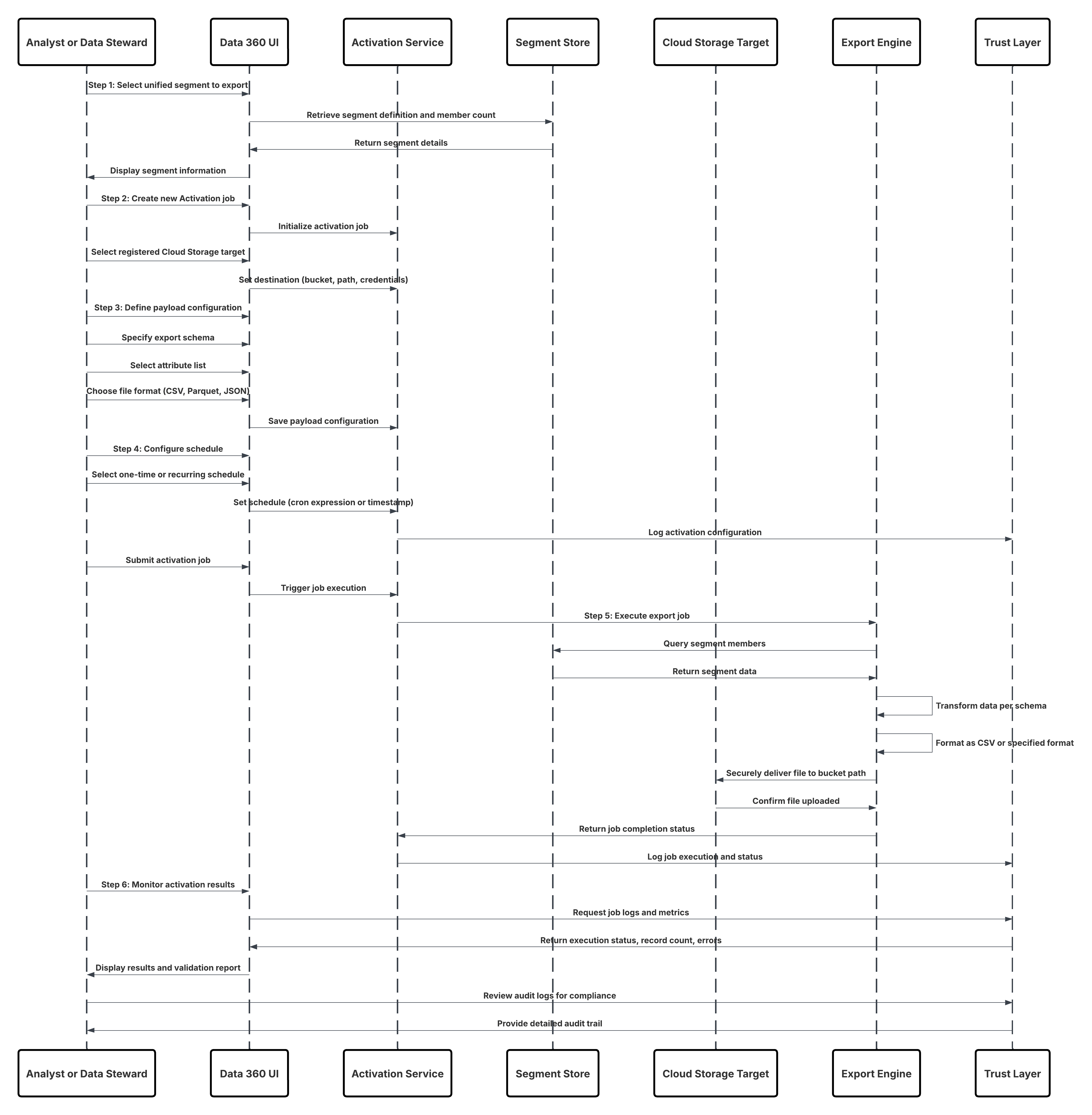

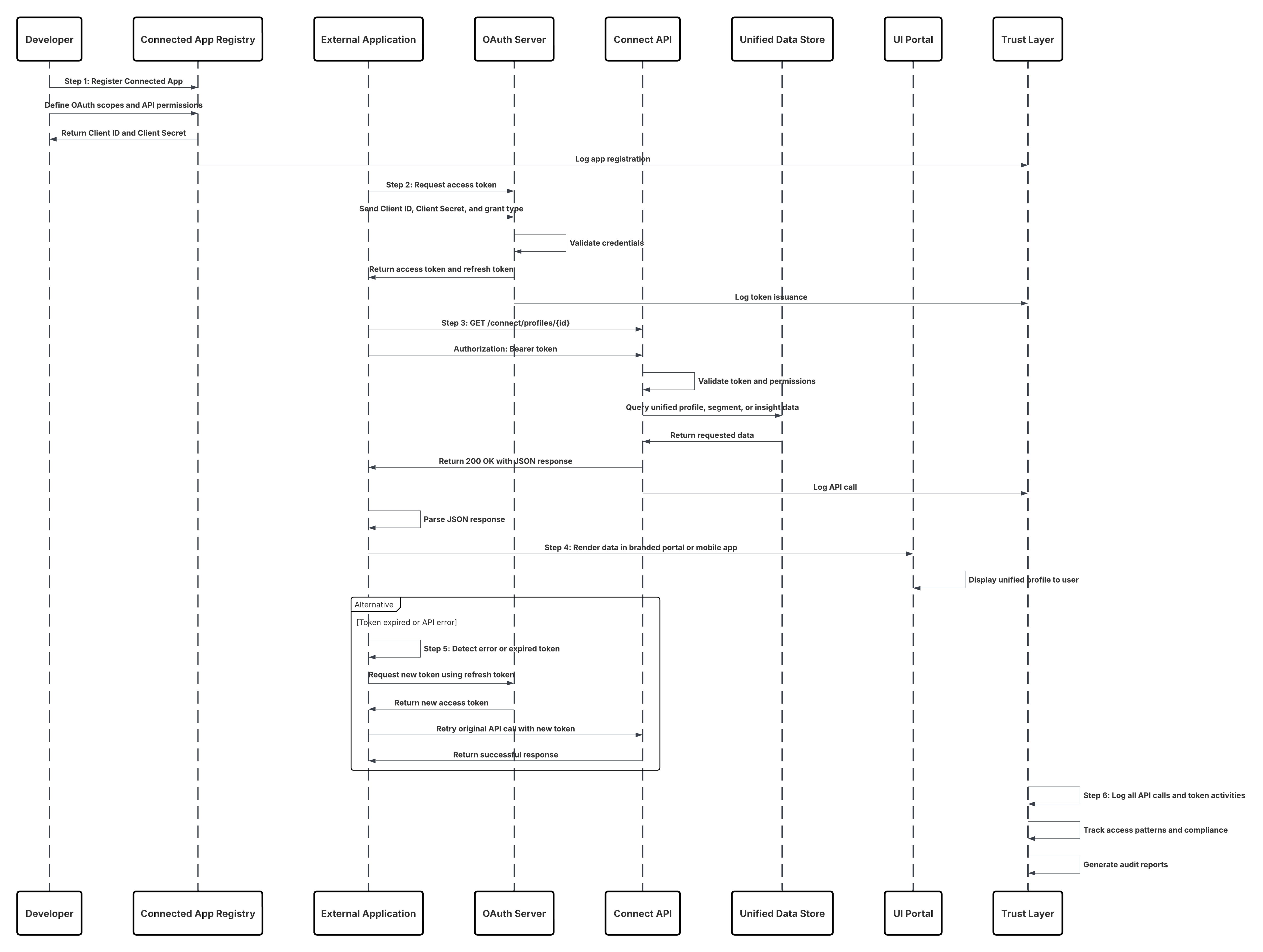

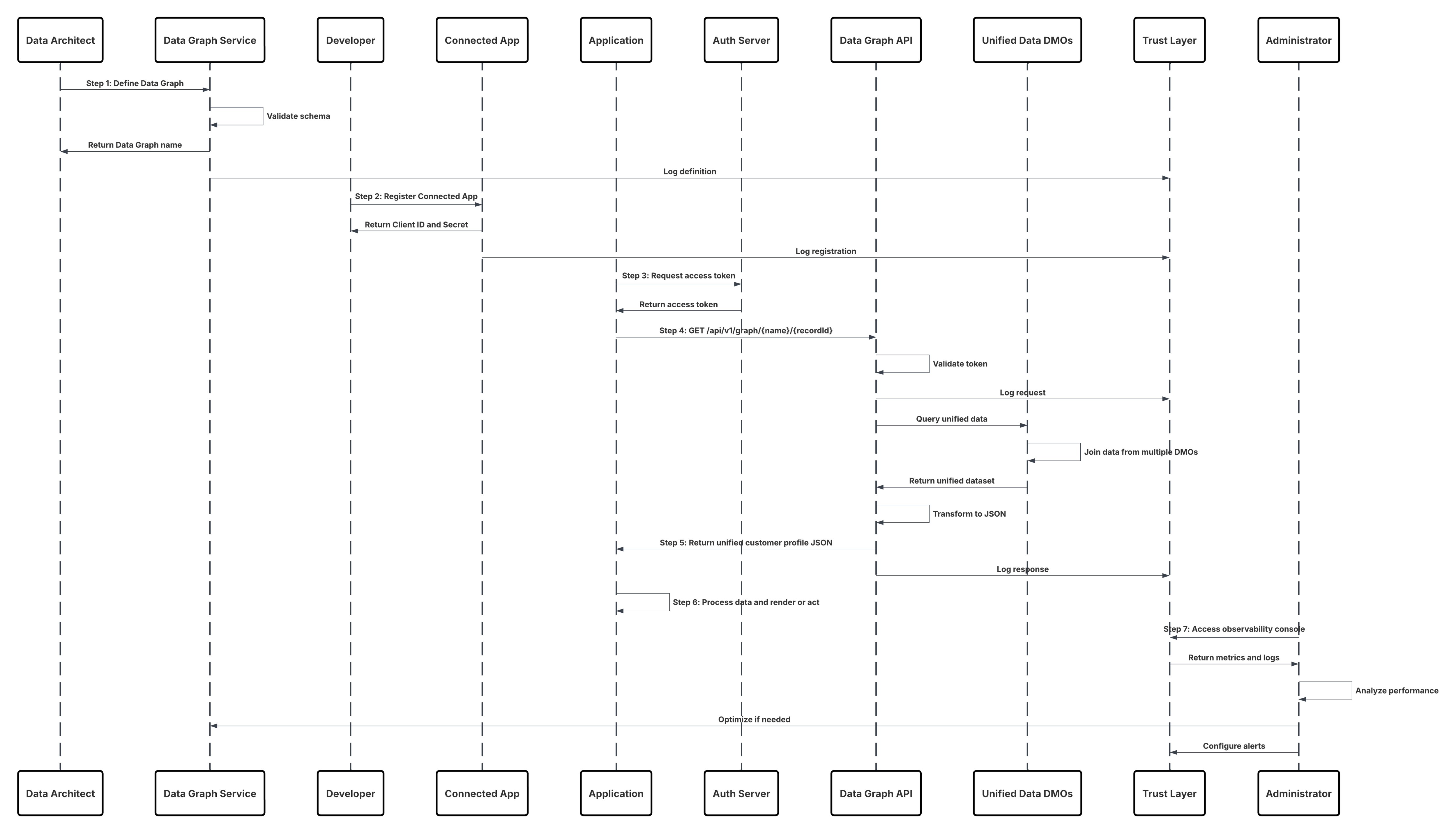

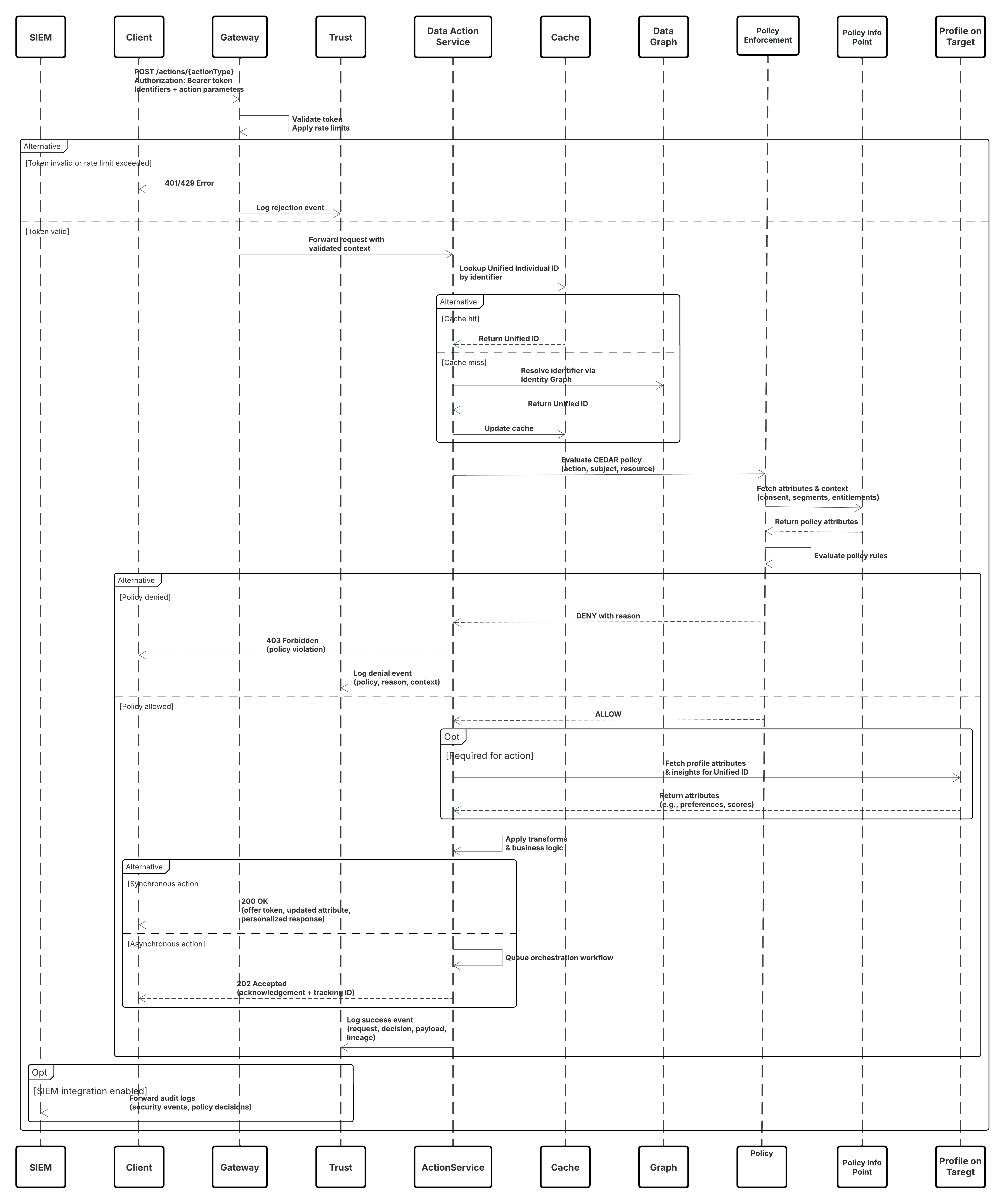

- Sketch: A UML sequence diagram that shows you how the solution addresses the scenario.

- Results: Explains the details of how to apply the solution to your integration scenario and how it resolves the forces associated with that scenario. This section also contains new challenges that can arise as a result of applying the pattern.

- Sidebars: Additional sections related to the pattern that contain key technical issues, variations of the pattern, pattern-specific concerns, and so on.

- Example: An Real world scenario that describes how the design pattern is used in a real-world Salesforce scenario. The example explains the integration goals and how to implement the pattern to achieve those goals.

Use this table as a table of contents for the integration patterns contained in this document.

| Pattern level1 | Pattern level2 | Pattern | Secenario |

|---|---|---|---|

| Data Ingestion Patterns--Data Inbound | Batch Ingestion Patterns | Bulk Data Ingestion from Cloud Storage | Data is ingested from an enterprise cloud storage source such as Amazon S3, Azure Blob, or Google Cloud Storage into Data 360 in the form of large batches of raw data (e.g., transactions or product logs). |

| Bulk Data Ingestion from Salesforce Clouds | Data 360 receives CRM data in bulk from multiple Salesforce orgs (e.g., Sales Cloud, Service Cloud) to build unified customer profiles. | ||

| Streaming and Real Time Ingestion Patterns | Event Driven Ingestion via Ingestion API--Streaming | Data 360 subscribes to streaming ingestion endpoints that receive continuous event payloads (e.g., purchase events, IoT telemetry) from enterprise systems for real-time profile updates. | |

| Real Time Web and Mobile Behavior Ingestion | Data 360 collects and processes real-time website and mobile app behavioral data through SDKs to enrich engagement metrics and personalization models. | ||

| Near Real Time CRM Synchronization via Streaming | Data 360 receives CRM data updates (e.g., contact, case, opportunity changes) in nearReal-Time via event streams to maintain a continuously synchronized Customer 360 view. | ||

| Event Stream Ingestion from Cloud Messaging Platforms--Kinesis and MSK | Data 360 consumes streaming data directly from cloud event platforms like AWS Kinesis or Kafka (MSK) to process high-frequency operational or IoT events. | ||

| Zero Copy Patterns--Inbound and Outbound | Inbound Zero Copy--External Platforms to Data 360 | Data 360 queries external datasets (e.g., Snowflake, BigQuery) on demand through Zero Copy Ingestion, without physically moving data into Salesforce. | |

| Outbound Zero Copy--Data 360 to External Platforms | External systems such as Databricks or Tableau access enriched segments and insights in Data 360 via Zero Copy Outbound connections without data replication. | ||

| Unified Multi Org Data Platform with Data Cloud One | Data Cloud One unifies multiple Salesforce orgs and external data sources under a shared metadata and semantic model, providing a consistent Customer 360 without data duplication. | ||

| Data Activation Patterns--Data Outbound | Batch Activation Patterns | Segment Activation to Marketing and Advertising Platforms | Data 360 activates curated customer segments directly into Marketing Cloud, Meta, Google Ads, or other ad platforms for personalized campaign delivery |

| Data Export to Cloud Storage | Data 360 exports unified or filtered datasets (e.g., consented customer records) as CSV or Parquet files to enterprise cloud storage for analytics or compliance archiving. | ||

| On Demand API Based Activation | Custom Application Integration via Connect API | External applications invoke the Data 360 Connect API inReal-Time to retrieve or trigger personalized actions (e.g., loyalty balance check or AI offer generation) based on current customer data.Custom-built web or mobile applications retrieve harmonized Data 360 insights securely via the Connect REST API to display within enterprise or partner UIs. | |

| Complete Customer Profile Retrieval via Data Graph API | A system retrieves a customer’s unified profile using the Data Graph API, returning a pre-joined, real-time JSON representation of the complete 360° view for decisioning or personalization. | ||

| Real Time Data Actions | Real Time Data Action Turning Customer Signals into Instant Action | Data 360 detects and processes a live event (e.g., consent update, purchase trigger) and immediately invokes a connected system or Salesforce Flow for downstream action.A customer activity signal (e.g., churn risk detected) in Data 360 triggers an instant real-time action — such as updating CRM, invoking Einstein scoring, or launching a retention journey. |

The integration patterns in this document are classified into three categories: data, process, and visual integrations.

Data integration patterns in Data 360 address the movement and synchronization of data across systems to ensure that both Data 360 and external platforms hold consistent, timely, and trustworthy information. These patterns typically handle large-scale, high-volume data flows and rely on governed pipelines that enforce schema consistency, lineage tracking, and mastering rules.

- Batch Ingestion Patterns represent the foundational layer of enterprise data onboarding. Bulk data ingestion from cloud storage services such as AWS S3, Azure Blob, or Google Cloud Storage allows large historical datasets to be loaded periodically into Data 360 for identity resolution, segmentation, and analytics. Similarly, bulk ingestion from Salesforce Clouds—such as Sales, Service, or Marketing Cloud—uses native connectors and Data Streams to bring CRM data into the unified data layer, ensuring harmonization and continuity across systems of engagement.

- Streaming and Real-Time Ingestion Patterns extend this by capturing high-velocity event data. Event-driven ingestion via the Ingestion API enables external systems to continuously stream customer activity into Data 360. Real-time web and mobile behavior ingestion captures clickstream and interaction data directly from digital touchpoints to drive immediate personalization. Near-real-time CRM synchronization through Streaming APIs ensures customer attributes and consent updates are reflected quickly across systems. Event stream ingestion from messaging platforms like Amazon Kinesis or Confluent MSK supports continuous high-throughput pipelines, aligning Data 360 with enterprise event architectures.

- Unified Multi-Org Data Platform with Data Cloud One further exemplifies data integration, providing a consolidated environment to unify data from multiple Salesforce orgs and external sources under a common governance and semantic layer. This enables organizations to achieve enterprise-wide data consistency, shared data models, and scalable analytics.

- In the activation phase, Batch Activation Patterns follow the same data integration principle. Segments curated in Data 360 are exported in scheduled jobs to downstream marketing and advertising platforms—such as Marketing Cloud, Meta Ads, or Google Ads—where they trigger campaign execution. Similarly, datasets can be exported to cloud storage targets to fuel external analytics and data science pipelines. Across all of these use cases, Data 360 acts as the source of truth for synchronized and curated customer data.

Process integration patterns in Data 360 involve triggering or orchestrating business processes across systems in nearReal-Time. These patterns allow Data 360 to actively participate in enterprise workflows, enabling contextual responses and dynamic data activation.

- On-demand API-Based activation enables real-time engagement scenarios. Through the Connect API, custom applications can query or activate customer profiles directly from Data 360 as part of operational processes—such as an agent console retrieving unified profile insights during a customer interaction. The Complete Customer Profile Retrieval via Data Graph API supports composite applications and microservices that rely on API-driven access to the full entity graph of a customer, allowing for dynamic experiences without pre-staged segments.

- Real-Time Data Actions push this integration approach further by enabling immediate responsiveness. When a customer signal—such as a purchase, form submission, or threshold event—is detected, Data 360 can trigger actions such as updating a CRM record, invoking an external webhook, or launching a personalized offer workflow. These patterns embody true process orchestration, bridging real-time customer intelligence with automated operational execution.

Virtual integration patterns in Data 360 enable live access to external or federated data sources without physically copying or duplicating data. These are critical for enterprises that require governed, up-to-date information at query time while minimizing data movement.

- Inbound Zero Copy Data Federation(External Platforms-to-Data 360) allows external systems—such as data warehouses or data lakes—to share datasets with Data 360 through secure, governed connections (e.g., Snowflake Secure Data Sharing). This ensures Data 360 can access and operate on external data virtually, preserving freshness and eliminating unnecessary replication.

- Outbound Zero Copy Data Sharing(Data 360-to-External Platforms) enables Data 360 to expose curated datasets for external consumption, such as AI modeling, business intelligence, or advanced analytics, through secure data federation and live query mechanisms.

Choosing the best integration strategy for your system isn’t trivial. There are many aspects to consider and many tools that can be used, with some tools being more appropriate than others for certain tasks. Each pattern addresses specific critical areas including the capabilities of each of the systems, volume of data, failure handling, and transactionality.

When selecting an integration pattern, start by answering two fundamental questions that shape the overall design and behavior of the integration. What are you integrating? — Process, Data, or Virtual access This dimension defines the primary purpose of the integration.

- Process integrations focus on orchestrating business workflows and coordinating actions across systems.

- Data integrations focus on synchronizing, enriching, or propagating data between systems.

- Virtual integrations focus on accessing external data inReal-Time without copying or persisting it in Salesforce or Data 360.

Understanding this intent helps determine the level of orchestration, data movement, and coupling required between systems.

- How does it need to execute? — Synchronous or Asynchronous method defines the execution model of the integration.

- Synchronous integrations are real-time and blocking, where the caller expects an immediate response—commonly used for user-driven or validation scenarios.

- Asynchronous integrations are non-blocking and decoupled, designed to handle scale, long-running processes, retries, and high data volumes.

Together, these two dimensions—integration intent and execution timing—provide a clear, consistent framework for selecting the right integration pattern while balancing user experience, scalability, and operational resilience. Note: An integration can require an external middleware or integration solution (for example, Enterprise Service Bus) depending on which aspects apply to your integration scenario.

This table lists the patterns and their key aspects to help you determine which pattern best fits your requirements when your integration is from Salesforce to another system

| Type | Timing | Outbound Considerations |

|---|---|---|

| Data Integration | Asynchronous | Segment Activation to Marketing and Advertising Platforms |

| Process/Data Integration | Synchronous | Custom Application Integration via Connect API Complete Customer Profile Retrieval via Data Graph API |

| Data Integration | Synchronous | Real Time Data Action Turning Customer Signals into Instant Action |

| Virtual Integration (Using Zero Copy) | Asynchronous | Outbound Zero Copy--Data 360 to External Platforms |

| Virtual Integration | Asynchronous | Unified Multi Org Data Platform with Data Cloud One |

This table lists the patterns and their key aspects to help you determine the pattern that best fits your requirements when your integration is from another system to Salesforce.

| Type | Timing | Inbound Considerations |

|---|---|---|

| Data Integration | Asynchronous | Bulk Data Ingestion from Cloud Storage Bulk Data Ingestion from Salesforce Clouds |

| Data Integration | Asynchronous | Event Stream Ingestion from Cloud Messaging Platforms--Kinesis and MSK Near Real Time CRM Synchronization via Streaming |

| Process Integration | Synchronous | Event Driven Ingestion via Ingestion API--Streaming Real Time Web and Mobile Behavior Ingestion |

| Virtual Integration | Asynchronous | Inbound Zero Copy--External Platforms to Data 360 |

This table lists some key terms related to middleware and their definitions with regards to these patterns.

| Term | Definition |

|---|---|

| Event Handling | Event handling refers to receiving, routing, and responding to identifiable occurrences from a source system or application. Middleware handles events by identifying the target endpoint, forwarding the event, and triggering the required business action (e.g., logging, retries, or activating downstream services). In Data 360 architectures, event handling is critical for real-time data ingestion, activation triggers, and publish/subscribe patterns. Events may originate from external systems (Kafka, AWS Kinesis) or Salesforce Platform Events and are routed by middleware or the Data 360 event bus for immediate processing. |

| Protocol Conversion | Protocol conversion allows communication between systems using different data transport standards. Middleware translates proprietary or legacy protocols (like AMQP, MQTT, FTP) into supported Data 360 protocols (REST, gRPC, or HTTPS). This ensures interoperability across heterogeneous systems. Since Data 360 doesn’t natively handle protocol conversion, middleware provides the adaptation layer, encapsulating or transforming messages into a format Data 360 APIs and connectors can interpret. |

| Translation and Transformation | Translation and transformation ensure interoperability by mapping one data format or schema to another. Middleware performs these transformations to align diverse data payloads (CSV, XML, JSON) with Data 360 data model objects (DMOs) and Unified Data Layer Objects (UDLOs). This includes cleansing, enriching, and applying semantic or ontology-based mapping before ingestion. While Salesforce offers transformation tools like Data Prep Recipes, complex transformations (especially for semantic harmonization) are best handled in middleware. |

| Queuing and Buffering | Queuing and buffering rely on asynchronous message passing to ensure resilient, decoupled communication. Middleware platforms (e.g., MuleSoft, Kafka, or Azure Event Hub) provide persistent queues that temporarily store data when Data 360 or downstream systems are busy or unreachable. This prevents data loss and supports near-real-time ingestion or activation retries. Data 360 supports streaming ingestion and flow-based outbound messaging, but durable queuing and guaranteed delivery are typically handled by middleware. |

| Synchronous Transport Protocols | Synchronous transport protocols involve blocking, real-time request/response operations. The sender waits for a reply before proceeding. In Data 360, these are used for on-demand API-based activations, real-time enrichment, or profile lookups, where responses are required immediately. Middleware ensures connection reliability and manages retries or fallback handling for synchronous Data 360 API calls. |

| Asynchronous Transport Protocols | Asynchronous transport protocols support non-blocking, decoupled communication where the sender continues processing without waiting for a response. Middleware handles asynchronous flows for batch activations, streaming ingestion, and event-driven activation. This allows high throughput and resilience — critical for event streaming and near-real-time data ingestion patterns in Data 360. |

| Mediation Routing | Mediation routing defines complex message flow between systems, ensuring that the right data or event reaches the correct consumer. Middleware acts as the mediator, handling routing logic based on rules, headers, content, or event type. In Data 360 integrations, mediation ensures events and profile updates from multiple systems are properly routed to Data Ingestion APIs, activation endpoints, or external consumers. This simplifies orchestration and supports multi-system data synchronization. |

| Process Choreography and Service Orchestration | Process choreography and orchestration coordinate multi-system processes. Choreography supports autonomous, asynchronous event-driven flows, where systems act based on shared rules without a central controller. Orchestration is a centrally managed flow that directs service execution. In Data 360 architectures, middleware manages orchestration for ingestion and activation across systems, while Salesforce workflows or Flows handle lightweight choreographies within the platform. Complex orchestration, requiring transaction coordination or state management, is recommended at the middleware layer. |

| Transactionality (Encryption, Signing, Reliable Delivery, Transaction Management) | Transactionality ensures atomic, consistent, isolated, and durable (ACID) operations across systems. Salesforce and Data 360 are transactional within their boundaries but don’t support distributed transactions across external systems. Middleware handles global transaction control, including encryption, message signing, rollback, compensation, and reliable delivery. For mission-critical data flows (e.g., financial or consent updates), middleware ensures end-to-end integrity and recovery across Data 360 and external systems. |

| Routing | Routing specifies the controlled flow of messages or data between components. It may be based on headers, content type, priority, or rules. Middleware handles routing logic for events and activations involving Data 360, such as directing enriched audience segments to different downstream systems (ad platforms, warehouses, CRM apps). Although routing can be implemented within Salesforce (Apex, Flows), middleware routing is preferred for scalability, flexibility, and governance. |

| Extract, Transform, and Load (ETL) | ETL involves extracting data from source systems, transforming it into a consistent schema, and loading it into a target (like Data 360). Middleware or ETL tools handle these operations before data ingestion. Data 360 can receive ETL outputs via APIs, connectors, or bulk ingestion pipelines, and also supports Change Data Capture (CDC) for near-real-time synchronization. Middleware ETL processes are essential for integrating legacy systems and ensuring data quality before unification in Data 360. |

| Long Polling | Long polling (Comet programming) is a method for maintaining open communication between systems for real-time updates. The client sends a request and the server holds it until an event occurs, then responds and reopens a new connection. Salesforce uses this in the Streaming API and CometD/Bayeux protocols for event-driven data synchronization. Middleware can subscribe to these events and forward them to Data 360 for real-time ingestion or activation triggers, ensuring minimal latency across enterprise systems. |

Data ingestion is the first and most critical step in Salesforce Data 360’s data lifecycle. It’s how raw information from multiple external systems—CRM, ERP, web, mobile, or third-party APIs—enters the platform and becomes part of a unified customer view. The right ingestion pattern depends on what the business needs:

- Data volume — how much data is moving at once

- Latency — how fresh the data must be

- Source system capabilities — how the system can connect and deliver data

Data 360 supports multiple ingestion modes to meet these needs: batch for high-volume loads, streaming for near real-time updates, event-based for transactional immediacy, and Zero Copy ingestion for instant access to external data without physically moving it. Together, these patterns ensure that every customer signal—whether it’s a purchase event, a clickstream log, or a loyalty update—flows into Data 360 efficiently, securely, and in the right time frame to power trusted analytics and AI-driven experiences.

Batch ingestion patterns are the backbone of large-scale data onboarding in Data 360. They are optimized for scenarios where data is processed in bulk—typically on a scheduled or periodic basis—rather than continuously. These patterns are best suited for:

- Historical data loads to initialize the platform with existing enterprise records

- Regular synchronization with systems of record such as ERPs, data warehouses, or proprietary databases

- Use cases where real-time freshness is not critical, but consistency, completeness, and auditability are

Batch ingestion offers predictable performance and operational simplicity, making it a trusted choice for enterprises managing terabytes of structured or semi-structured data. Data 360 provides a set of production-ready, generally available connectors that support batch ingestion natively. These connectors streamline integration setup, reduce custom ETL development, and ensure data quality and security across every import. The table below highlights the most common connectors used for enterprise-scale batch ingestion.

Context

This pattern is designed for enterprise scenarios that involve ingesting large volumes of structured data—such as CSV or Parquet files—and unstructured assets from centralized data lakes or scheduled file drops. Data sources commonly include cloud storage platforms like Amazon S3, Google Cloud Storage (GCS), and Microsoft Azure Blob Storage, where files are periodically delivered as part of upstream data pipelines or batch exports.

Problem

How can an organization establish a reliable, secure, and high-throughput process to ingest massive, file-based datasets from its primary cloud storage platform into Data 360 on a predictable, recurring schedule—without sacrificing governance, scalability, or performance?

Forces

Ingesting massive, file-based datasets into Data 360 is not a simple data transfer exercise — it’s an architectural challenge shaped by scale, governance, and platform constraints.

Data Volume and Scale : Data 360 ingestion connectors are optimized for reliability and governance, not arbitrary bulk throughput. For example, the Amazon S3 connector supports up to 100 million rows or 50 GB per object, whichever limit is reached first. For enterprises with historical datasets running into billions of records, this boundary becomes a key design constraint. A single-file, lift-and-shift approach quickly becomes infeasible, requiring intelligent data partitioning, chunking, and orchestration strategies to achieve scale without hitting connector limits.

Schema Definition and Maintenance : Data 360 requires explicit schema definitions for every ingestion pipeline to ensure semantic and structural integrity. In the case of S3 ingestion, a csv file must define column headers and a single representative data row. This file acts as the canonical contract between the source system i.e. cloud storage and Data 360. Any misalignment — in field names, data types, or encoding — can cause ingestion failures or silent data corruption. Maintaining this schema file in version control and enforcing validation through CI/CD or data governance workflows becomes a best practice for production environments.

Strict Naming Conventions : Data 360 enforces strict object and field naming rules to maintain consistency across the metadata graph.

- Object names must start with a letter and can only include letters, digits, or underscores.

- Field names must follow the same patterns.

Files that violate these conventions — for example, fields containing spaces, special characters, or unsupported symbols — will fail schema validation during ingestion. Enterprises must implement pre-ingestion data hygiene processes to sanitize and normalize incoming file structures.

Authentication and Security Posture : Each connection to external storage must comply with enterprise-grade security and compliance standards.

- Authentication is typically handled through AWS Access/Secret Keys or federated Identity Provider (IdP) authentication.

- IAM roles must be scoped to enforce least privilege, allowing only read access to the specified storage paths.

- For secure access, outbound IP addresses must be directly added to the source system’s allowlist.

These layered controls ensure that every file transfer operates within a zero-trust, auditable perimeter, balancing enterprise compliance with the flexibility required for large-scale ingestion.

Solution

This table contains solutions to this integration problem.

| Solution | Fit | Comments |

|---|---|---|

| Use Native Cloud Storage Connectors (Amazon S3, Google Cloud Storage, Azure Blob Storage) | Best | This is the recommended and most reliable pattern for large-scale, recurring file-based ingestion into Data 360\. Native connectors provide managed authentication, schema mapping, and secure data movement directly into Data 360’s Data Lake Objects (DLOs). Ideal for scheduled batch loads where latency is not critical (for example, scheduling hourly or daily). |

| Handling Large Datasets (Beyond Connector Limits) | Best with Preprocessing | Each connector enforces ingestion limits (for example, S3: 100M rows or 50 GB per object). For larger datasets, implement an ETL preprocessing step to partition data into smaller files/folders that fall under these thresholds. Then, configure multiple data streams to ingest each partition in parallel, and use the append node in a batch data transforms) within Data 360 to recombine the partitions into a unified dataset. |

| Security and Governance | Best | All connectors support secure authentication via cloud-native methods (IAM roles, service accounts, or access keys). For added control, restrict access to Data 360 IP ranges via the cloud provider’s allowlist. Data transfer occurs over encrypted channels, with files stored in a temporary, secure staging layer during ingestion. |

| When Not to Use | Suboptimal | This pattern is not optimal for:

|

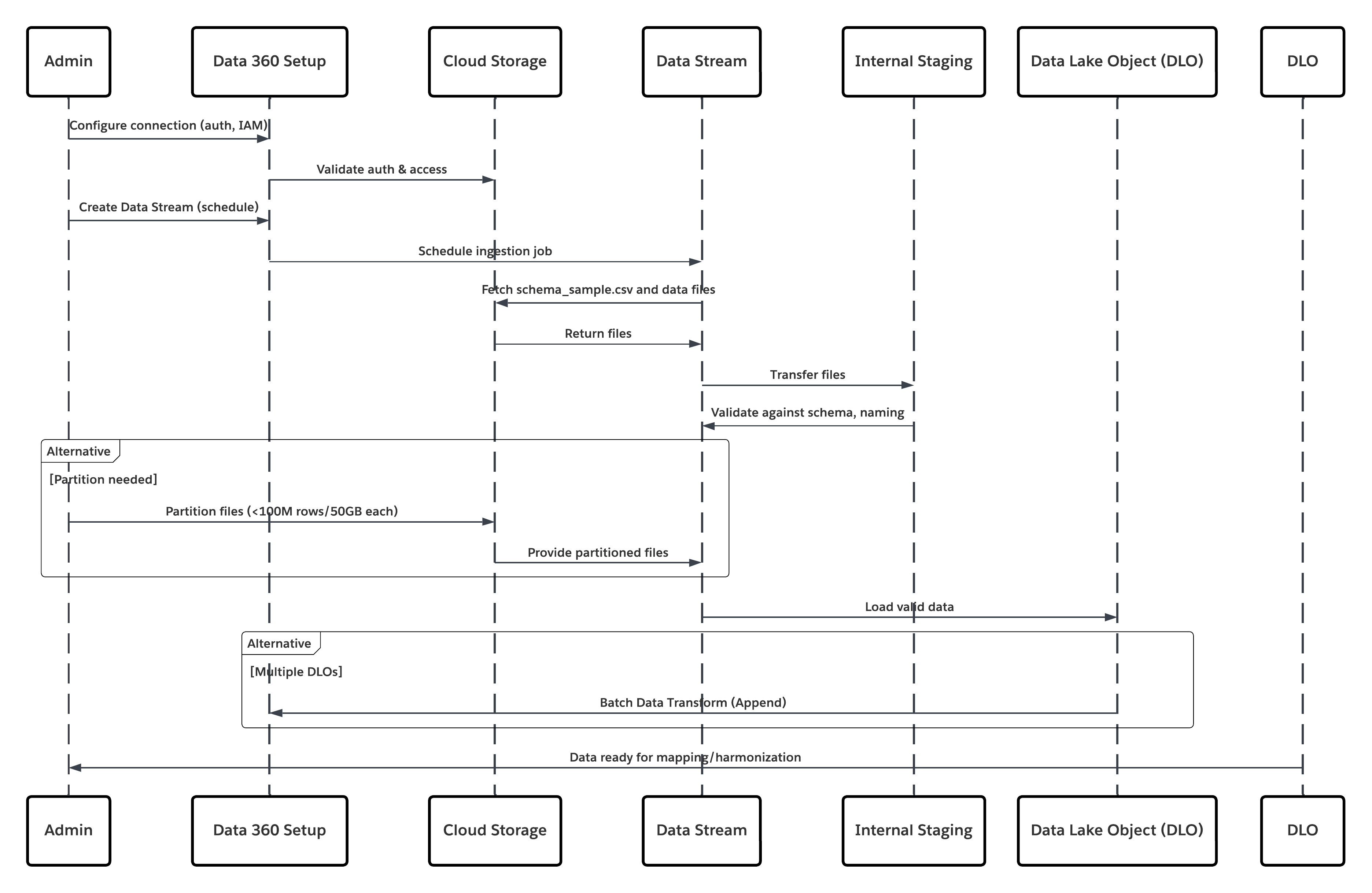

Sketch

This diagram illustrates sequence of Steps to ingest the data from cloud storage into Data 360

In this scenario:

- Admin configures a connection to cloud storage via the Data 360 Setup interface (specifying authentication, bucket details, IAM roles, and whitelisting).

- Data Cloud Setup interface authenticates with the Cloud Storage Platform, verifying credentials and access.

- Admin creates a data stream in Data 360, linking the data stream to the object/folder in Cloud Storage and defining the ingestion schedule.

- On schedule trigger, data stream requests source files (e.g., CSV, Parquet) from the Cloud Storage Platform.

- Cloud Storage Platform delivers files, including the required valid schema_sample.csv and other data files compliant with naming conventions.

- Data Stream transfers files to the Internal Staging Environment within Data 360.

- Data 360 Pipeline processes the files: Uses the schema definition from schema_sample.csv Validates structure, field names, and divides the load if above ingestion thresholds (100 million rows/50 GB per file). If large files are detected, a preprocessing partitioning step (notified to admin for next run) is carried out externally.

- Records are imported from staging into a data lake object (DLO).

- If required and data is partitioned, use the append node in a batch data transform to combine multiple DLOs.

- Data 360 logs success/failure, updates status for monitoring, and signals that data is ready for mapping, harmonization, and unification.

Results

The application of this pattern enables secure, scheduled, and large-scale ingestion of structured or unstructured files from enterprise cloud storage platforms into Data 360. The process is automated, scalable, and resilient — delivering raw data into data lake objects (DLOs) that serve as the foundation for harmonization and mapping to the Customer 360 Data Model.

Ingestion Mechanisms

The ingestion mechanism depends on the connector and scheduling strategy defined within Data 360.

| Ingestion Mechanism | Description |

|---|---|

| Native Cloud Storage Connector (Amazon S3, GCS, Azure) | Recommended for direct ingestion of files in CSV or Parquet format from the enterprise’s cloud data lake. These connectors support incremental and full refresh schedules. For example, a bank can configure a daily sync of customer transaction files from an S3 bucket to a DLO. |

| Partitioned File Strategy | For very large datasets (beyond 100 million rows or 50 GB per object), the data is partitioned into smaller logical sets (e.g., by month or region). Each partition is managed as a separate Data Stream and later recombined using a Batch Data Transform with an Append node. |

| Automated Scheduled Sync | Data 360 provides a declarative scheduler (hourly, daily, or custom cadence) that triggers ingestion jobs automatically, ensuring data freshness without manual intervention. |

Error Handling and Recovery

Error handling and recovery are critical to ensure reliability in high-volume ingestion operations.

- Error Detection: Each Data Stream run logs ingestion errors (e.g., schema mismatch, file corruption, or naming violations) in Data 360 Monitoring. Administrators can review and reprocess failed batches.

- Recovery Mechanism: Data 360 maintains checkpointing to ensure failed batches do not corrupt prior ingestions. Retries can be configured after correcting source issues (e.g., malformed CSVs).

- Schema Validation: The schema_sample.csv file defines data types and structure. Any change triggers validation to avoid silent schema drift across runs.

Idempotent Design Considerations

Ingestion is idempotent by design — reprocessing the same file does not result in duplicate records. Key strategies include:

- File Fingerprinting: Data 360 computes checksums to identify and skip previously processed files.

- Transactional Ingestion: Data is staged and only committed to the DLO upon successful processing of all records.

- Append vs. Replace: Depending on business logic, streams can append to or fully replace the target DLO; this ensures deterministic outcomes and prevents partial data overlap.

Security Considerations

Security is integral throughout the ingestion pipeline, from authentication to encryption and access control.

- Authentication & Authorization: Connectors use Salesforce’s Secure Integration Framework, leveraging Named Credentials and External Credentials for authentication without exposing secrets.

- Encryption: Data is encrypted in transit (TLS 1.2+) and at rest (AES-256).

- Network Controls: Source storage systems (e.g., S3 buckets) must allowlist Data 360 IPs.

- Compliance Alignment: Supports enterprise data protection frameworks such as GDPR, HIPAA, and FFIEC guidelines when paired with Customer-Managed Keys (CMK).

- Auditability: Every ingestion job and credential access is logged for traceability and compliance reporting.

Sidebars

Timeliness

Timeliness depends on the ingestion schedule and data volume.

- Large enterprise datasets (100M+ rows) may require partitioning for parallel ingestion.

- Typical ingestion latency ranges from minutes to a few hours, depending on file size and transformation complexity.

- For near-real-time ingestion, Data 360 Streaming or API-based connectors may complement the file-based model.

Data Volumes

- Best suited for high-volume, periodic batch ingestion.

- Each object processed through the S3 connector supports up to 100 million rows or 50 GB per file.

- For petabyte-scale systems, use data partitioning and multi-stream orchestration.

Endpoint Capability and Standards Support

The capability and standard support for the endpoint depends on the solution that you choose.

| Connector Type | Endpoint Requirements |

|---|---|

| Amazon S3 Connector | S3 bucket with appropriate IAM policy and schema\_sample.csv file defining the schema. |

| Google Cloud Storage Connector | Service account credentials and bucket access with uniform naming conventions. |

| Azure Storage Connector | Access key or SAS token–based authentication; blob or folder structure must follow Data 360 conventions. |

State Management

State is tracked through Data Streams and their last successful run timestamp.

- Data 360 automatically maintains sync states and offsets, ensuring only new or modified files are processed on subsequent runs.

- When integrating with external ETL tools, unique file identifiers (e.g., UUIDs or timestamps) are recommended to avoid duplication.

Complex Integration Scenarios

In advanced enterprise architectures, this pattern can integrate with:

- Middleware ETL Pipelines (e.g., Informatica, MuleSoft): to orchestrate pre-processing, validation, and file partitioning before handoff to Data 360.

- AI/ML Workflows: processed DLO data can be published via DMO to model training environments or RAG indexes through Data 360 Activation Targets.

- Transactional Systems: harmonized DMOs can trigger downstream updates in Salesforce CRM or external systems via Data Actions or Platform Events.

Example

A global financial institution stores customer and transaction data in an AWS S3 data lake, where partitioned Parquet files are generated nightly by region (such as US, EU, and APAC). The data architecture team configures multiple Data Streams in Data 360, each connected to a regional folder, with a shared schema_sample.csv ensuring consistent headers and data types across all partitions. Nightly ingestion schedules automatically load the data into DLOs, after which Batch Data Transforms append all regional partitions into a unified Customer_Transactions_DLO. This harmonized dataset is then mapped to the Customer 360 Data Model, enabling downstream analytics and AI activation. The approach delivers automated and reliable ingestion from the existing data lake, enforces strong authentication and encryption aligned with enterprise IT policies, and provides a scalable, modular foundation that supports future expansion and schema evolution.

Context

A primary and critical use case for Data 360 is unifying customer data across the Salesforce ecosystem. This pattern covers natively ingesting data from core Salesforce platforms — Sales Cloud and Service Cloud (collectively Salesforce CRM) and Marketing Cloud Engagement. Sources include standard and custom CRM objects (e.g., Account, Contact, Case, Opportunity) and Marketing Cloud Engagement data extensions that hold engagement events, email sends, and tracking data.

Problem

How can an organization efficiently and reliably ingest standard and custom CRM objects and Marketing Cloud Engagement data extensions into Data 360 so the data can be used to build unified customer profiles (identity resolution, Customer 360), while maintaining performance, governance, and minimal disruption to source systems?

Forces

Native connectors simplify the job, but several operational and architectural forces must be managed:

- Comprehensive Source Permissions: A dedicated connecting user (integration account) must have appropriate object- and field-level read permissions. Failure to assign required permission sets (for example, a pre-built Data 360 connector permission set) is a common cause of ingestion failure.

- Data Refresh Modes & Cost: Connectors support full and delta/incremental refresh modes. Full refreshes are heavier on performance and credits; delta extracts reduce load but depend on reliable change-tracking in the source system.

- Custom Schema & Field Mapping: CRM instances often include custom objects/fields. Accurate schema mapping and handling of custom fields (names, types) is required to avoid mapping errors or semantic drift.

- Starter Data Bundles vs. Custom Mapping: Starter Data Bundles accelerate onboarding by pre-selecting typical objects/fields, but heavily customized orgs will need bespoke stream definitions.

- Throughput & API Limits: Source org API limits and Marketing Cloud extract rates constrain how aggressively you can schedule refreshes.

- Data Hygiene & Naming Conventions: Source field names, null behavior, and data types must be normalized before ingestion to prevent downstream mapping issues.

- Security & Least Privilege: The connector relies on secure authentication and must respect least-privilege IAM patterns, auditability, and network controls.

Solution

This table contains solutions to this integration problem.

| Solution Area | Fit | Comments / Implementation Details |

|---|---|---|

| Solution Fit | Best | Use the native Salesforce CRM Connector and Marketing Cloud Engagement Connector in Data 360\. Start with Starter Data Bundles for standard use cases and accelerate onboarding. Use manual stream customization for bespoke or domain-specific data models. |

| Handling Highly Customized CRM Instances | Best with Mapping Workshop | Treat Starter Bundles as a baseline and conduct a mapping workshop to identify: Custom objects and relationships. Formula or computed fields. Managed package extensions. For heavy formula fields, compute values in a pre-stage ETL or inside Data 360 Transforms to minimize API load on source orgs. |

| When Not to Use | Suboptimal Scenarios | Avoid this pattern if: You need high-frequency or real-time event ingestion (instead, use Streaming Connectors, Platform Events, or Zero-Copy Federation). The source org has limited API capacity and cannot sustain scheduled extracts without throttling or queue delays. |

| Security & Governance | Mandatory Controls | Principle of Least Privilege - Use a dedicated integration user with minimal read access. Never use org-wide admins. Authentication — Use OAuth 2.0 and connected apps; rotate client secrets regularly and monitor refresh token usage. Audit & Traceability — Log all ingestion runs, schema changes, and connector events. Forward logs to SIEM or compliance systems for audit readiness. Data Classification — Apply PII/PHI tagging and Attribute-Based Access Control (ABAC) using CEDAR policies immediately post-ingestion to enforce masking, consent, and downstream compliance. |

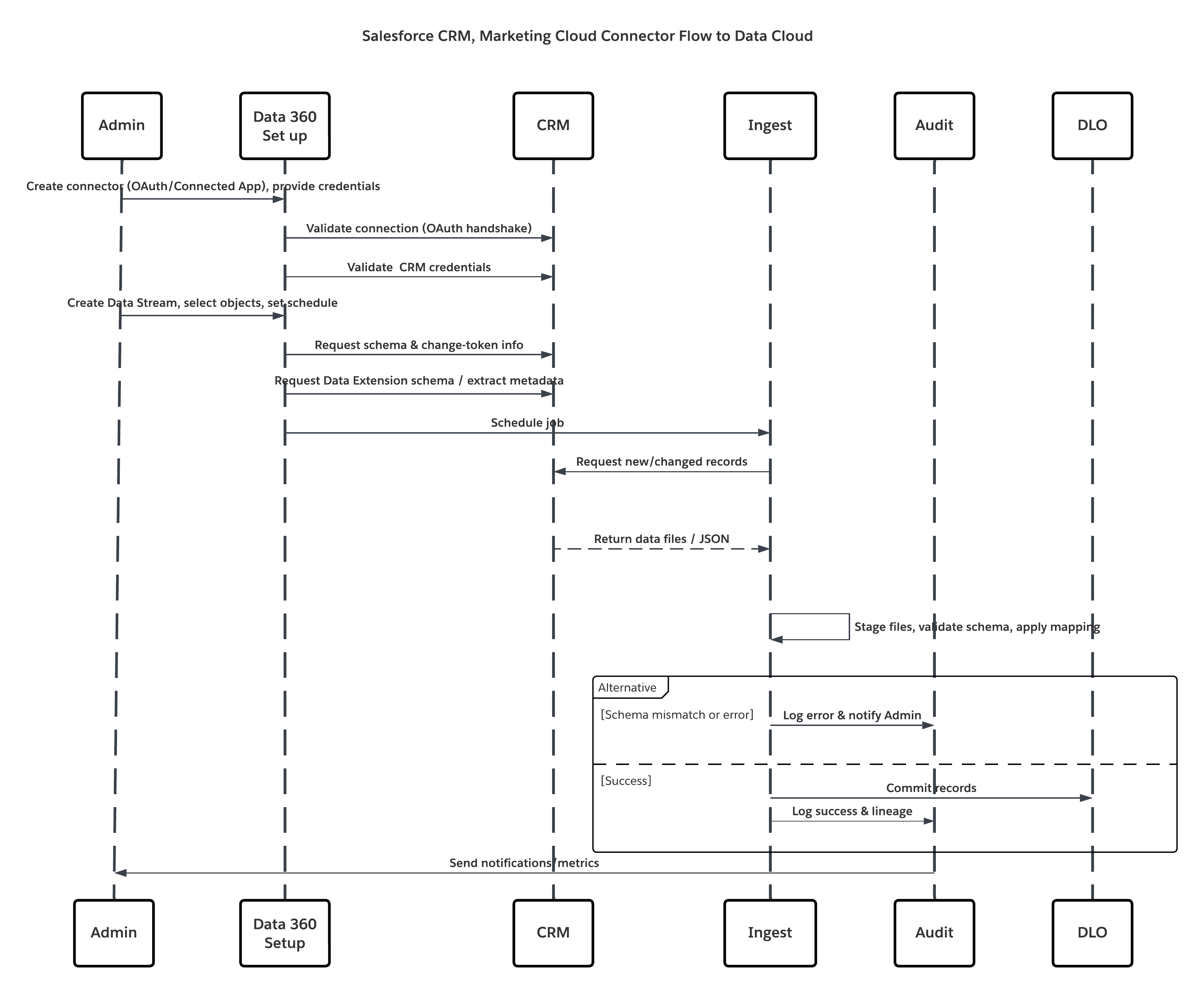

Sketch

This diagram illustrates sequence of Steps to ingest the data from cloud storage into Data 360

In this scenario:

- Admin provisions integration users and assigns connector permission sets in source org(s).

- Admin configures connectors in Data 360 Setup (connects to Salesforce CRM and Marketing Cloud via OAuth/connected app).

- Admin creates Data Streams selecting objects and Data Extensions, chooses full or delta refresh, and sets schedules.

- On scheduled run, Data 360 requests schema and delta tokens from the source(s).

- Source systems return records (delta or full payload). Marketing Cloud may deliver extracts; CRM may return JSON/Query results.

- Data 360 stages files in its internal secure staging area and validates against mapped schema.

- If validation fails, ingestion logs error, alerts admin, and halts commit. If validation succeeds, Data 360 commits records atomically to the target DLO.

- Monitoring & audit logs are updated with lineage, run duration, row counts, and credential usage. Alerts issued to admins if thresholds or errors triggered.

Results

Core customer relationship and marketing engagement data are ingested into Data 360 as Data Lake Objects (DLOs). This yields:

- Unified dataset containing profiles, cases, opportunities, and email/engagement metrics.

- Foundation for identity resolution and construction of Unified Individual Profiles.

- Operational readiness for downstream harmonization, enrichment, AI modeling, and activation while preserving governance and auditability.

Ingestion Mechanisms

The ingestion mechanism depends on the connector and scheduling strategy defined within Data 360.

| Mechanism | When to use |

|---|---|

| Salesforce CRM Connector (native) | Best for standard/custom CRM objects; supports full and delta refresh. |

| Marketing Cloud Engagement Connector (native) | Best for Data Extensions, sends, and tracking extracts; supports full/delta modes. |

| Starter Data Bundles | Accelerate onboarding for common Sales/Service/Marketing objects. |

| Custom Streams + Preprocessing | Use when complex transforms or heavy schema normalization is required. |

Error Handling and Recovery

Error handling and recovery are critical to ensure reliability in high-volume ingestion operations.

- Per-run Logs: Each Data Stream run provides success/failure details and row-level errors.

- Retries & Checkpointing: Failed runs can be retried after fixing source or schema issues; Data 360 uses staging and atomic commit semantics.

- Alerts: Configure alerts for schema drift, repeated failures, or delta-sequence gaps.

Idempotent Design Considerations

Ingestion is idempotent by design — reprocessing the same does not result in duplicate records. Key strategies include:

- Change Detection: Delta extracts rely on source system change indicators (LastModifiedDate / system change data capture). Verify the source provides reliable timestamps/flags.

- Deduplication: Use unique business keys (e.g., Contact.ExternalId) to dedupe or upsert into DLOs.

- Transactional Commit: Records are staged and only committed when batch processing completes successfully.

Security Considerations

Security is integral throughout the ingestion pipeline, from authentication to encryption and access control.

- Authentication & Authorization: Connectors use Salesforce’s Secure Integration Framework, leveraging Named Credentials and External Credentials for authentication without exposing secrets.

- Encryption: Data is encrypted in transit (TLS 1.2+) and at rest (AES-256).

- Network Controls: Source storage systems (e.g., S3 buckets) must allowlist Data 360 IPs.

- Compliance Alignment: Supports enterprise data protection frameworks such as GDPR, HIPAA, and FFIEC guidelines when paired with Customer-Managed Keys (CMK).

- Auditability: Every ingestion job and credential access is logged for traceability and compliance reporting

Sidebars

Timeliness

Timeliness depends on the ingestion schedule and data volume.

- Ideal cadence depends on business need — hourly for near-real-time marketing triggers, nightly for large reconciliations.

- Delta modes reduce load and cost; full refreshes are heavier and used for initial loads or major schema changes.

Data Volumes

- CRM connectors are optimized for transactional and mid-volume datasets (millions of records).

- For extremely large historical volumes, consider staged ETL to partition and load in stages.

Endpoint Capability and Standards Support

The capability and standard support for the endpoint depends on the solution that you choose.

| Connector | Endpoint Requirements |

|---|---|

| Salesforce CRM Connector | Source org must permit a Connected App, OAuth tokens, and a dedicated integration user with read permissions. |

| Marketing Cloud Connector | Marketing Cloud API credentials or installed package; Data Extensions must expose data via Extracts/API. |

State Management

- Connector State: Data Streams maintain last successful sync timestamps and delta offsets.

- Master Key Strategy: Prefer consistent business identifiers (external IDs) so downstream reconciliation and upserts are deterministic.

Complex Integration Scenarios

In advanced enterprise architectures, this pattern can integrate with:

- Hybrid Topologies: Combine CRM ingestion with streaming (Platform Events) for near-real-time updates.

- Middleware Orchestration: Use MuleSoft or ETL tools when complex orchestration, enrichment, or transformation pre-ingestion is required.

- Activation Feedback Loops: Harmonized DMOs can trigger downstream updates to source systems via Data Actions or platform APIs (careful with SoD controls).

Example

A multinational retailer consolidates Accounts, Contacts, Cases, Opportunities, and Marketing Cloud engagement metrics into Data 360 to create a unified customer view. The Starter Data Bundle initializes core Sales and Service objects, while the team extends the model with custom fields such as Loyalty_Membership__c and Customer_Tier__c to capture loyalty context. CRM Data Streams run hourly in delta mode, and Marketing Cloud Engagement syncs daily using delta extracts for engagement events. These datasets are processed through DLOs and identity resolution, resulting in a unified customer profile that combines CRM and engagement signals to power personalization and downstream AI models.

These patterns are built for scenarios where milliseconds matter — when customer interactions, transactions, or signals must trigger immediate insight or action. They go beyond traditional, scheduled batch ingestion to enable event-driven data flow, where information is processed the moment it’s generated. In the Salesforce Data 360 ecosystem, “real-time” isn’t a single mode — it’s a continuum of latency models. On one end lies near-real-time synchronization, where updates from systems of record (like CRM or ERP) are reflected in the Data 360 within seconds or minutes. On the other end is true real-time event capture, where client-side behavioral signals — such as clicks, purchases, or mobile interactions — are ingested and activated in milliseconds. For architects, the distinction is more than semantic. It defines how pipelines are designed, how APIs are invoked, and how downstream decisions are made. Selecting the right pattern — whether near-real-time sync or event-streaming ingestion — ensures the system meets the business’s operational latency targets while maintaining data integrity, scalability, and governance.

Context

This pattern enables any external system — such as a custom application, an Internet-of-Things (IoT) platform, a point-of-sale (POS) system, or a third-party service — to programmatically push event data into Data 360 in near-real-time as discrete events occur.

Problem

How can a developer reliably send single records or small asynchronous batches of events from an external application to Data 360 with low latency so the data is available quickly for processing, segmentation, and activation?

Forces

This pattern delivers low latency and better developer control but introduces several technical constraints and operational dependencies:

- Developer Dependency: Requires developer effort to implement authenticated REST API clients and error/retry logic — it is not a point-and-click connector.

- Strict Schema-on-Write: The Ingestion API enforces schema-on-write. A precise schema must be defined and uploaded to the connector configuration; all payloads must conform exactly or be rejected.

- Dual Interaction Modes: Same connector supports both streaming (JSON) for low-latency, record-by-record updates and bulk (CSV) for larger periodic syncs — architects must choose per use case.

- Authentication & Security: Calls must be authenticated via a Salesforce Connected App using OAuth 2.0 (e.g., JWT Bearer Flow for server-to-server). Token management, rotation, and least-privilege scopes are mandatory.

- Operational Visibility: Developers and platform teams must implement monitoring for response codes, retries, dead-letter queues, and schema drift alerts.

- Real-time Graph Requirement: For true instant activation (instant segmentation, real-time DMO mapping), the target Data Model Object (DMO) must be part of the real-time data graph; otherwise events traverse a slightly higher-latency pipeline.

Solution

This table contains solutions to this integration problem.

| Solution Area | Fit | Comments / Implementation Details |

|---|---|---|

| Solution Fit | Best for low-latency event capture | Use the Data 360 Ingestion API (streaming JSON) for pushing single events or micro-batches. Configure the Ingestion API connector with a strict OAS 3.0 schema (.yaml). Use bulk CSV ingest for larger, less frequent syncs. |

| Handling Schema Changes | Strict / Managed | Schema changes are breaking: update OAS .yaml, version the connector, and perform contract testing. Implement rolling schema migration if producers cannot change simultaneously. |

| When Not to Use | Suboptimal | Not ideal if preprocessing is needed ( ex:deduplication, guaranteed order etc.) , or for extremely large bulk loads (use native bulk connectors or batch ETL). If the source cannot produce schema-valid payloads or cannot authenticate securely, use alternative ingestion methods. |

| Security & Governance | Mandatory | Use OAuth 2.0 with least-privilege scopes, rotate keys, log token usage. Enforce TLS 1.2+, validate client IPs if required, and ensure payload PII tagging. All events must carry provenance metadata (source, timestamp, schema version, idempotency key). |

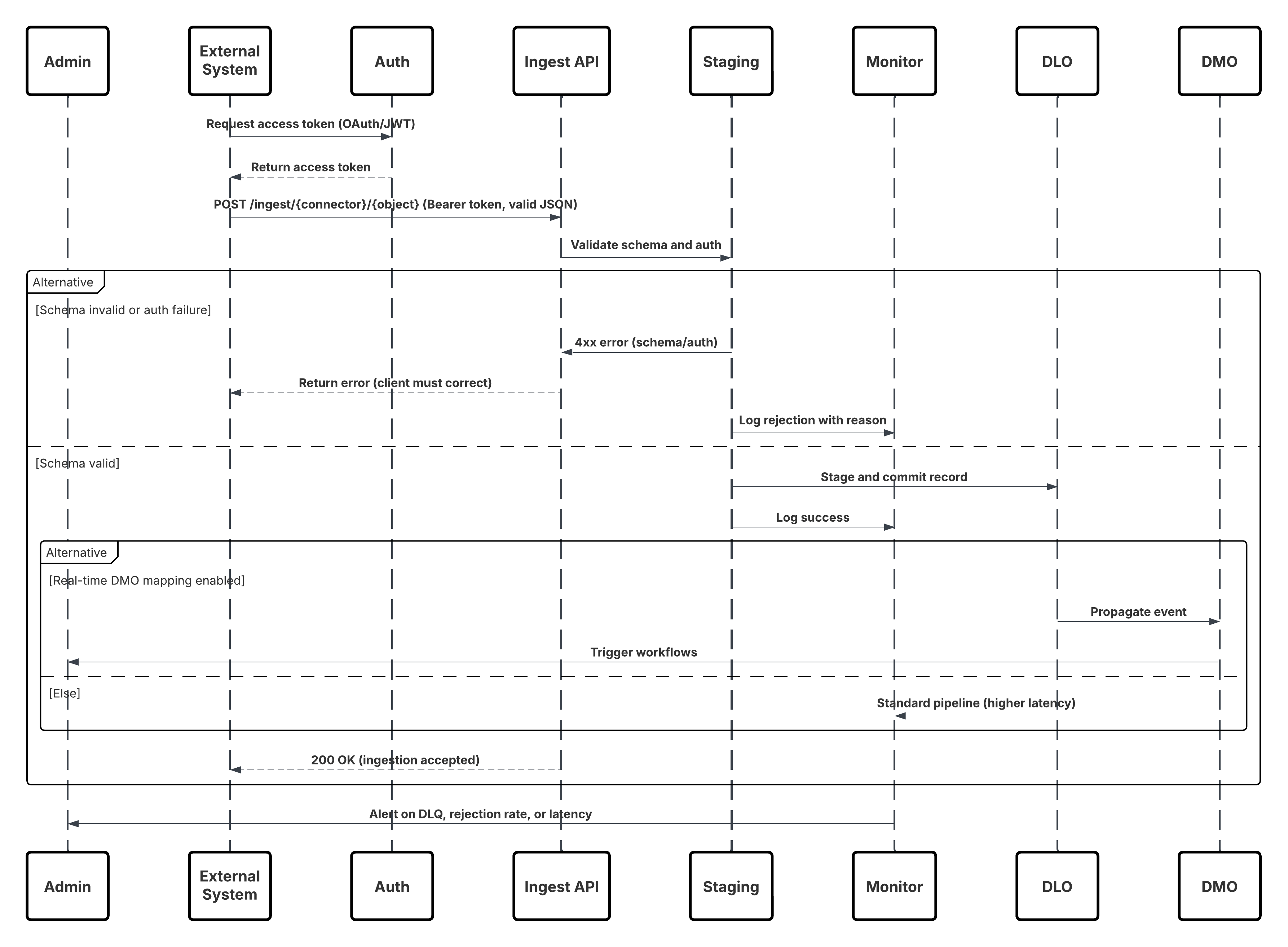

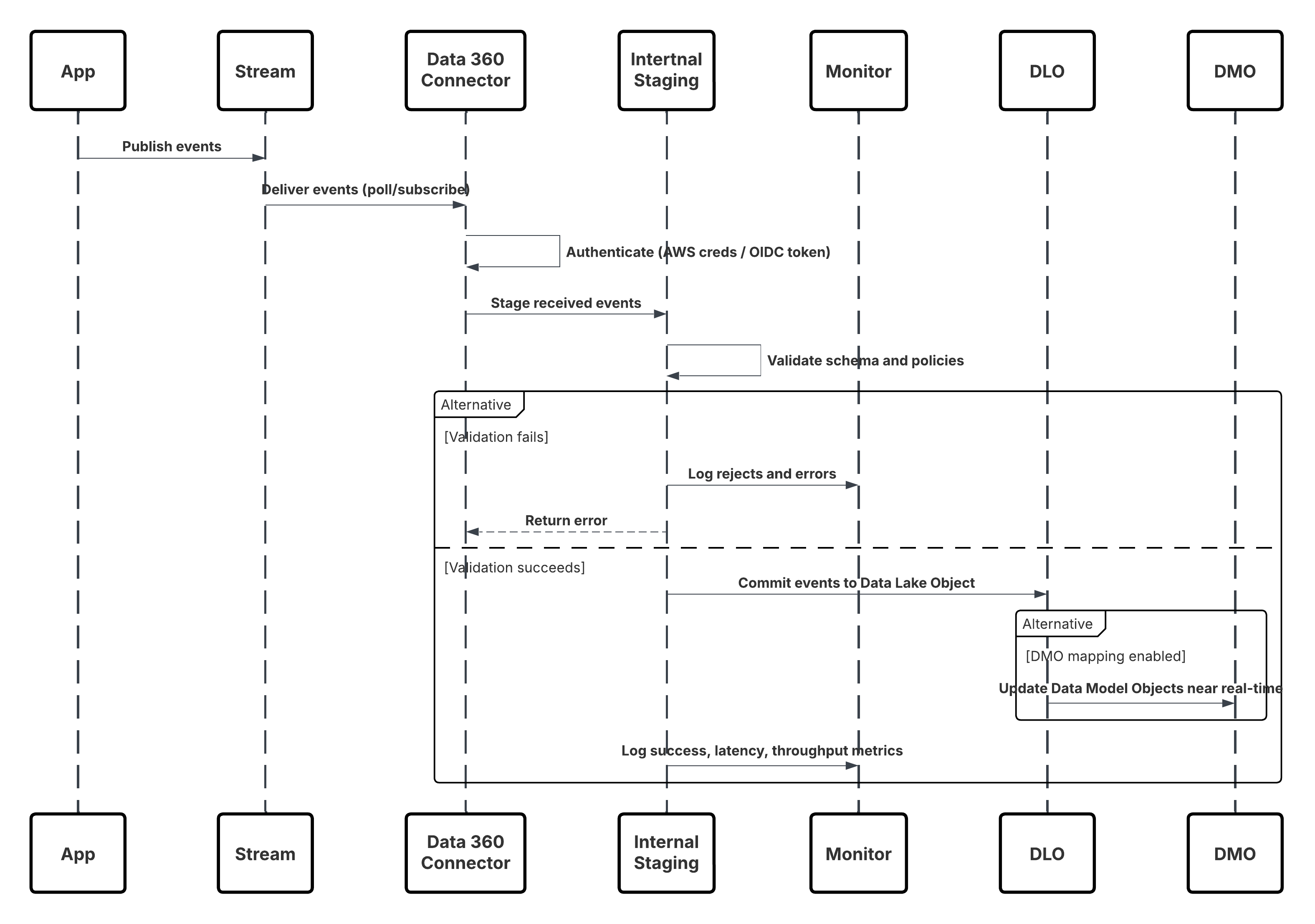

Sketch

This diagram illustrates sequence of Steps to ingest the data from Ingestion API into Data 360

In this scenario:

- External System requests authentication via OAuth/JWT from Auth Server.

- Auth Server returns access token to External System.

- External System sends data ingestion POST request to Data 360 Ingestion API with authorization and JSON payload.

- Ingestion API validates request schema and authentication via Staging & Validation module.

- On schema/authentication failure:

- Error returned to External System.

- Rejection logged for monitoring and alerting.

- On successful validation:

- Records staged and committed into Data Lake Object (DLO).

- Success logged for monitoring.

- If enabled, data propagated to Real-Time Data Graph (DMO) triggering downstream workflows.

- Otherwise, data processed via standard batch or higher-latency pipeline.

- Ingestion API confirms success to External System.

- Monitoring components alert Admin on dead-letter queues, rejection rates, or latency issues.

Results

External event data is ingested into Data 360 DLOs with low latency. When the target DMO is part of the real-time graph, the data is available for instant segmentation, agent workflows, AI models, and operational activation. This enables rapid business responses to events originating from any connected system.

Ingestion Mechanisms

The ingestion mechanism depends on the connector and scheduling strategy defined within Data 360.

| Mechanism | When to use |

|---|---|

| Streaming JSON (Ingestion API) | Single events, micro-batches, behavioral events, clickstreams, IoT telemetry — when low latency is required. |

| Bulk CSV (Ingestion API bulk mode) | Larger, periodic uploads where latency requirements are relaxed. |

| Edge / Middleware | Use when you need validation, transformation, enrichment, or rate-limiting before pushing to the Ingestion API. |

Error Handling and Recovery

- Immediate (sync) errors: 4xx responses for schema/auth errors — client must fix payload or token and retry.

- Transient (async) failures: 5xx responses — client retries with exponential backoff and jitter.

- Dead-Letter Queue (DLQ): Persistent failures land in DLQ for manual inspection and replay.

- Monitoring: Track schema rejection rate, authentication failures, latency percentiles, and DLQ backlog. Alert on thresholds.

Idempotent Design Considerations

- Idempotency Key: Every event should include a unique idempotency key/message ID.

- Upsert Strategy: Use business keys (ExternalId) to avoid duplicates on replays.

- Dedup Window: Architect should define deduplication windows and retention for idempotency tracking.

Security Considerations

Security is integral throughout the ingestion pipeline, from authentication to encryption and access control.

- Authentication: OAuth 2.0 (JWT Bearer) recommended for server-to-server. Limit scopes to only ingestion.

- Encryption: TLS 1.2+ for transport; Data 360 enforces encryption at rest.

- Least Privilege: Connected App credentials have minimal rights; rotate secrets and instrument access logs.

- Payload Governance: Include consent/consumption flags in event metadata; apply ABAC/CEDAR policies immediately after ingestion.

- IP Controls / Private Connect: Where required, restrict access via allowlists or use Private Connect for private networking.

Sidebars

Timeliness

Timeliness depends on the ingestion schedule and data volume. Streaming JSON yields sub-second to second latency depending on processing and graph configuration. Bulk CSV is minutes to hours. Choose based on business SLAs.

Data Volumes

Individual event sizes should be small (< few KB). For high-throughput producers, consider batching at the producer or using a streaming buffer (Kafka/Kinesis) before calling the API.

State Management

- Schema Versioning: Maintain schema version in event metadata and use connector versioning when updating OAS contract.

- Connector Offsets: Data 360 handles commit semantics; producers should track idempotency keys and last successful sequence for safe replay.

Complex Integration Scenarios

In advanced enterprise architectures, this pattern can integrate with:

- Edge Validation Layer: Use middleware to translate heterogeneous producer formats to the required OAS contract, perform rate limiting, and pre-enrichment.

- Hybrid Architectures: Combine ingestion API for events and Connectors for bulk reconciliation.

- Agent Activation: Events mapped into real-time DMOs can trigger Agentforce workflows and Einstein models for automated decisioning.

Example

A retail chain streams point-of-sale (POS) purchase events into Data 360 inReal-Time to power immediate customer engagement. Each store runs a lightweight server component that collects transactions, enriches them with location and device metadata, and securely posts JSON events using JWT Bearer OAuth with idempotency keys to prevent duplicates. An admin defines the event structure by uploading an OAS schema for point ofsale and configuring the Ingestion API connector. Incoming events are ingested into the pos_sale DLO, mapped to the Sale DMO, and added to the real-time graph. As a result, high-value purchases are detected instantly, triggering VIP workflows in Agentforce and updating customer segmentation to drive real-time personalization.

Context

This pattern enables the capture of high-volume, granular user interaction data—such as page views, button clicks, product impressions, and video plays—from websites and mobile applications in trueReal-Time. It is foundational for delivering in-the-moment personalization, where every digital interaction can dynamically influence the user experience and drive engagement.

Problem

How can an enterprise capture and process a continuous stream of behavioral events from digital properties—spanning millions of user interactions per minute—and make that data immediately available in Data 360 to power real-time segmentation, personalization, and activation?

Forces

This use case introduces several design challenges that require a purpose-built, low-latency ingestion architecture:

- Extreme Throughput : High-traffic websites or mobile apps can emit millions of events per minute. The ingestion layer must scale horizontally to handle this volume without event loss or backpressure, ensuring consistent latency under peak loads.

- Client-Side Instrumentation : Unlike server-driven integrations, this pattern depends on client-side SDKs. A JavaScript beacon (Salesforce Interactions SDK) must be embedded in each page, or a native SDK integrated into mobile apps. This requires robust client deployment, versioning, and event schema governance.

- Low-Latency Event Processing : User actions—such as “add-to-cart” or “video play”—must reach Data 360 within seconds, enabling real-time activation and contextual responses (e.g., targeted offers, personalized recommendations).

- Data Harmonization & Identity Resolution : Captured events often include anonymous identifiers (cookies, device IDs, session tokens). To power Customer 360 use cases, these must be mapped to known profiles via Data 360’s identity resolution and harmonized to the Customer 360 Data Model.

Solution

The recommended approach is to use the Salesforce Marketing Cloud Personalization Connector — a native, fully managed streaming pipeline designed for high-throughput behavioral ingestion.

| Solution Area | Fit | Comments / Implementation Details |

|---|---|---|

| SDK-based Event Capture | Best | Deploy the Salesforce Interactions SDK (web) or native SDK (mobile). These lightweight libraries capture and serialize user interactions inReal-Time, attaching metadata (session ID, timestamp, context). |

| Event Streaming Pipeline | Best | Events are sent to Marketing Cloud Personalization’s event streaming service over secure HTTPS. This layer is horizontally scalable and optimized for low-latency transmission (<2s). |

| Data 360 Integration | Best | Data 360’s Personalization Connector subscribes to the streaming feed, ingesting each event into a Data Lake Object (DLO) in nearReal-Time. |

| Data Model Mapping | Best Practice | The ingested DLO is mapped to Customer 360 Data Model Objects (DMOs). This enables linking anonymous and known users through Identity Resolution. |

| Real-Time Graph Enablement | Optional / Recommended | Include mapped DMOs in the real-time graph for instant segmentation, triggering personalized actions via Einstein or Agentforce workflows. |

| When Not to use | Suboptimal | This pattern is not ideal when: The source data is web client and events (use the Ingestion API instead). The organization lacks control over web/mobile clients. Real-time behavior tracking is not required (use batch ingestion). |

| Handling the schema Changes | Managed Evolution | Event schemas evolve as new interactions are added. SDKs should version event definitions. Backward-compatible changes (adding optional fields) are safe; breaking changes require connector reconfiguration and contract testing. |

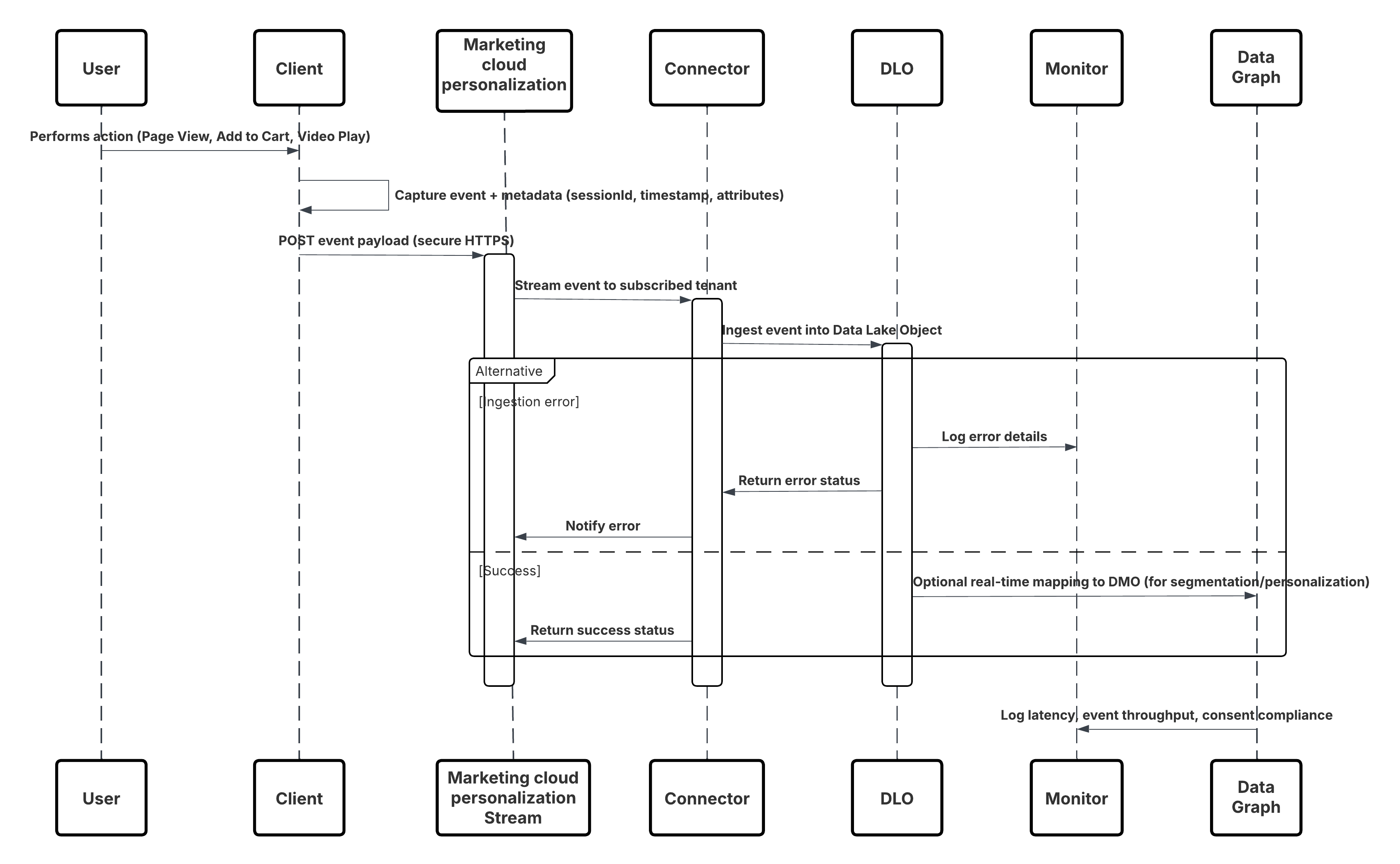

Sketch

This diagram illustrates sequence of Steps to ingest the data from Mobile and web channels into Data 360

In this scenario:

- Deploy the SDK in web or mobile channels (user-interaction capture).

- Configure SDK with tenant ID, environment, and consent controls.

- Stream captured JSON events (metadata + attributes) to Marketing Cloud streaming endpoint.

- In Data 360 Setup, create & configure the Personalization Connector for the tenant.

- Ingest events into a DLO and map the DLO → DMO inside Data 360.

- Enable the DMO in the real-time graph for immediate activation.

- Monitor latency, schema conformance, consent flags, throughput, error rates.

- Deploy to production and continually monitor.

Results

A continuous, low-latency stream of behavioral events flows from digital channels into Data 360. Within seconds, each user action becomes available for real-time segmentation, predictive modeling, or triggered personalization, enabling truly adaptive customer experiences.

Ingestion Mechanisms

The ingestion mechanism depends on the connector and scheduling strategy defined within Data 360.

| Mechanism | When to Use |

|---|---|

| Interactions SDK (Web) | Real-time capture from web browsers and SPAs. |

| Mobile SDK | Real-time capture from native mobile applications. |

| Personalization Connector | Managed subscription between Marketing Cloud and Data 360\. |

| Real-Time Graph Mapping | Enables immediate activation in Segmentation, Einstein, and Journeys. |

Error Handling and Recovery

- Layered Fault Tolerance: Implement multi-tier validation and retry mechanisms — client SDKs handle transient failures with exponential backoff, while the ingestion layer uses durable queues and replayable pipelines to prevent data loss.

- Schema & Data Governance: Version and validate event schemas continuously; invalid or evolving events are routed to a Schema Rejection or Dead-Letter Queue for safe triage and replay.

- Idempotency & Deduplication: Use stable event identifiers and upsert semantics to guarantee exact-once processing even during retries or replays.

- Monitoring & Recovery Automation: Continuous monitoring of throughput, latency, and error rates triggers automated recovery workflows — ensuring low latency, reliable delivery, and consistent real-time personalization outcomes.

Idempotent Design Considerations

- Every event must carry a unique idempotency key or message ID so duplicate submissions can be deduplicated downstream.

- Use business keys (e.g., sessionID + eventTimestamp + userID) where appropriate to identify duplicates.

- Define a deduplication window (e.g., 24 hours) during which duplicate events are ignored or filtered.

- Use upsert strategies where appropriate (e.g., update counters or flags rather than insert duplicates).

Security Considerations

Security is integral throughout the ingestion pipeline, from authentication to encryption and access control.

- Transport encryption: TLS 1.2+ for all SDK → streaming service connections.

- Data encryption at rest in Data 360 and marketing stream.

- SDK respects user consent flags (GDPR/CCPA) and suppresses tracking if consent is denied.

- Authentication of SDK traffic: ensure only approved tenants/clients can stream events.

- Metadata: each event must include source ID, timestamp, schema version, session ID, idempotency key.

- Least-privilege access: SDK endpoints and connectors limited to event ingestion scope; rotate credentials regularly.

- Data classification: Annotate PII in event payloads, enforce policies immediately post-ingestion

Sidebars

Timeliness

- Timeliness depends on end-user activity and the event streaming configuration.

- Events captured via the Salesforce Interactions SDK and delivered through the Marketing Cloud Personalization Stream typically achieve sub-second to ~2-second latency before becoming available in the Data 360 Real-Time Graph.

- This enables near-instant segmentation, personalization, and activation within active user sessions.

Data Volumes

Individual behavioral events (e.g., click, view, add-to-cart) are lightweight — generally 1–5 KB per payload. For large-scale digital properties, expect thousands to millions of events per minute. To ensure throughput and resilience:

- Use the SDK’s built-in batching and retry mechanisms for high-traffic pages.

- Offload burst handling to the Marketing Cloud streaming buffer layer.

- Monitor ingestion throughput and error ratios using the connector metrics dashboard.

State Management

Each event includes metadata for state and version control:

- Schema Versioning: Embed schema version in every event; version the Personalization Connector when updating the schema.

- Replay Handling: Events that fail due to transient network issues are retried automatically by the SDK with exponential backoff.

- Idempotency Keys: Unique identifiers (sessionId + eventType + timestamp) ensure replayed events do not create duplicates in Data 360.

- Offset Management: Data 360 tracks successful commits; any unprocessed events are re-queued by the connector until successfully ingested.

Complex Integration Scenarios

This pattern integrates seamlessly into advanced enterprise architectures:

- Edge Enrichment Layer: Add middleware (e.g., reverse proxy or serverless function) to inject additional context — such as geo, device type, or campaign metadata — before events reach Marketing Cloud.

- Hybrid(Streaming + Batch): Use the Marketing Cloud connector for real-time streams and combine it with batch ETL jobs (e.g., order data) for downstream reconciliation.

- Agent Activation: Events mapped to real-time DMOs can trigger Einstein Personalization, Agentforce workflows, or AI-driven decisioning to adapt the digital experience in the moment.

- Multi-Tenant Governance: Use consent flags and tenant-aware metadata to enforce privacy and compliance in multi-brand or multi-region environments.

Example

A global e-commerce company delivers personalized product recommendations and dynamic content to shoppers while they are actively browsing www.retailx.com, a React-based single-page application. Using the Salesforce Interactions SDK on the client side, the site captures page views, product clicks, cart actions, and video interactions inReal-Time. These events flow through the Marketing Cloud Personalization event stream and the Personalization connector into Data 360 DLOs, where they are modeled into DMOs and incorporated into the real-time graph. This architecture makes behavioral signals instantly available for segmentation, Einstein-driven personalization, and Agentforce activation, enabling responsive, in-session customer experiences

Context

For many business-critical processes, keeping Data 360 perfectly aligned with the latest updates in core CRM systems is essential. Customer service, sales, and marketing teams depend on up-to-date information to drive decisions, trigger journeys, and activate automation. This pattern provides a mechanism to synchronize changes to key Salesforce CRM objects—such as Contact, Account, and Case—into Data 360 with minimal delay, without the inefficiency or latency of frequent batch polling.

Problem

How can Data 360 maintain a near-perfectly synchronized state with key Salesforce CRM objects, ensuring that downstream analytics, segmentation, and AI-driven activation always operate on the most current data available?

Forces

This pattern introduces several technical constraints and architectural considerations:

- Event-Driven Architecture : Synchronization must be reactive—driven by change events in the CRM source org rather than periodic batch jobs.

- Selective Object Support : Not all Salesforce standard and custom objects support real-time streaming. Architects must reference the supported object list during design to avoid gaps.

- Access and Permissions : Enabling streaming requires that the integration user in the source org be assigned the “Enable Permissions for CRM Streaming” system permission.

- Data Freshness vs. Processing Cost : While near-real-time sync improves responsiveness, excessive event throughput may require horizontal scaling and robust error recovery mechanisms.

- Security and Trust Layer Integration : All event data must comply with Salesforce’s Trust and Security frameworks—encrypted in transit, validated for schema compliance, and processed within the organization’s trust boundary.

Solution

The recommended approach is to use the Salesforce CRM Connector with Streaming (Change Data Capture) enabled. When creating a data stream for a supported CRM object (e.g., Contact), administrators can toggle the “Enable Streaming” option. Under the hood, Salesforce’s Change Data Capture (CDC) platform publishes a ChangeEvent message every time a record is created, updated, deleted, or undeleted in the source CRM org. The Data 360 CRM Connector subscribes to these CDC events and applies the corresponding changes to the mapped Data Lake Object (DLO) within Data 360 in near-real time. This ensures continuous synchronization between CRM and Data 360 with minimal manual intervention.

| Solution Area | Fit | Comments / Implementation Details |

|---|---|---|

| CDC-based Streaming Connector | Best | Native Salesforce mechanism; fully managed and integrated with platform event infrastructure. |

| Event Subscription & Delivery | Best | Connector subscribes to ChangeEvent channels (e.g., /data/ContactChangeEvent) via durable replay IDs. |

| Data Mapping & Schema Evolution | Best Practice | Map streamed payloads to the corresponding DLO; handle schema versioning in metadata to prevent ingestion breaks. |

| Replay & Fault Recovery | Recommended | Utilize replay IDs and idempotency keys to avoid duplication and recover from transient errors. |

| Hybrid Mode (Streaming + Batch) | Optional | For unsupported objects or initial bulk load, use batch ingestion in combination with CDC streaming. |

| When Not to Use | Suboptimal | This pattern may be suboptimal when: The source object is not CDC-enabled. The use case does not require real-time updates (batch is sufficient). Network egress from the source org is restricted or event limits are exceeded. |

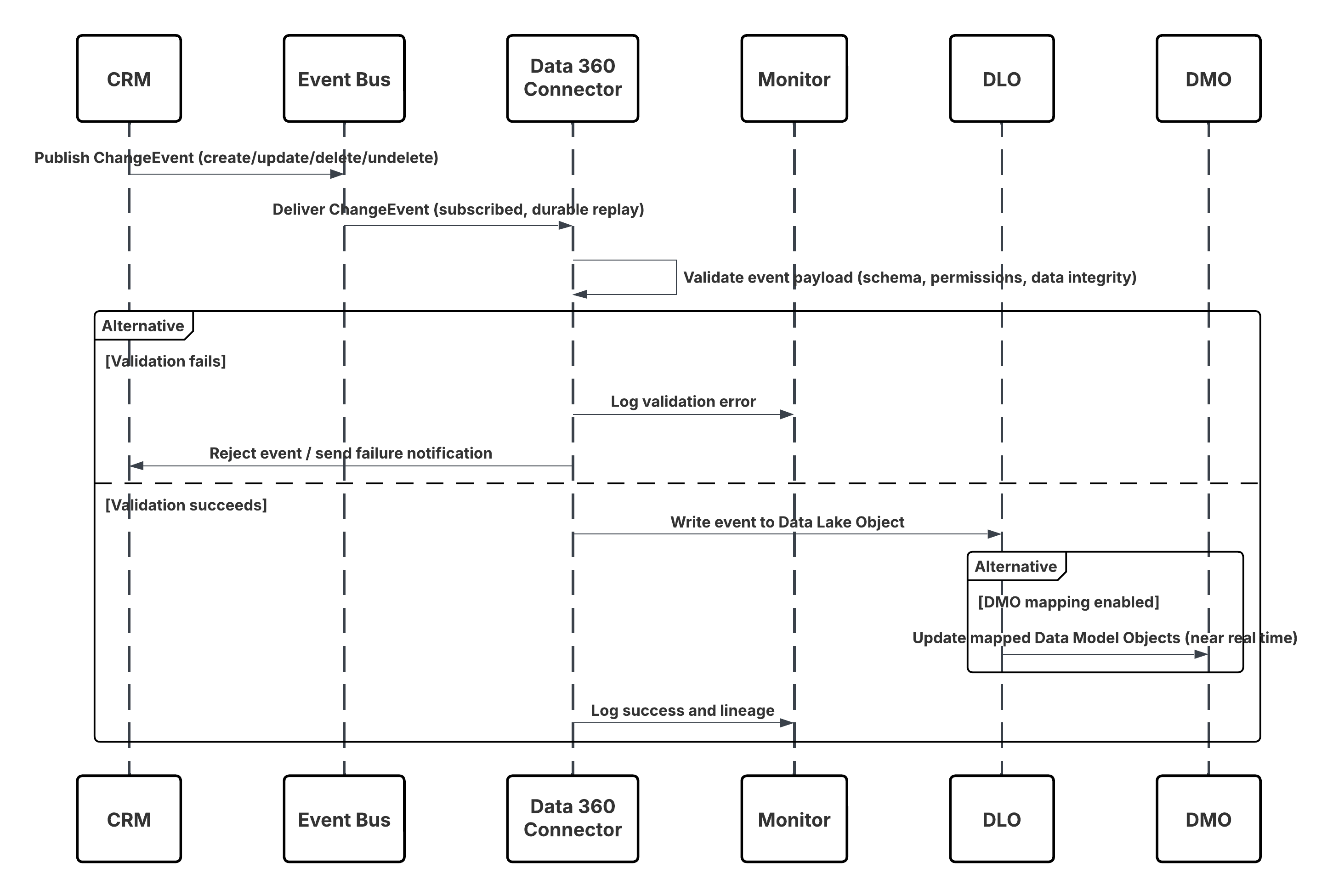

Sketch

This diagram illustrates sequence of Steps to ingest the data from CRM into Data 360 in nearReal-Time

In this scenario:

- Change occurs in Salesforce CRM (create/update/delete/undelete).

- CDC publishes a ChangeEvent to the internal Salesforce Event Bus.

- Data 360 CRM Connector subscribes to the Event Bus using a durable replay cursor.

- Event payload is validated for schema, permissions, and data integrity.

- Data 360 writes the validated event into the mapped Data Lake Object (DLO).

- If enabled, mapped Data Model Objects (DMOs) are updated in nearReal-Time, powering segmentation and activation.

Results

Data 360 maintains a continuously synchronized mirror of key CRM data. This enables:

- Real-time triggers (e.g., Journey launches when a Case is created).

- Up-to-date segmentation (e.g., move customers to the “Gold” segment upon Account status change).

- More accurate analytics and personalization based on live CRM context.

Ingestion Mechanisms

The ingestion mechanism for this pattern is natively managed through the Salesforce CRM Connector with Change Data Capture (CDC) enabled. Data 360 acts as a subscriber to the CDC event stream, ensuring reliable, low-latency synchronization between the source CRM org and Data 360.

| Mechanism | When to Use |

|---|---|

| Streaming via CDC (Preferred) | For all supported Salesforce standard and custom objects where near-real-time synchronization is required. |

| Hybrid Mode (CDC + Batch) | For objects not yet CDC-enabled, or where initial historical load is required. |

| Replay Subscription Mode | For resynchronization after downtime or deployment. |

| Error Isolation Mode | For testing and validation environments. |

Error Handling and Recovery

- Automatic Fault Recovery: The CRM Connector automatically retries transient network or platform errors using exponential backoff and routes persistent failures to the Dead-Letter Queue (DLQ) for replay.

- Schema & Auth Resilience: Schema mismatches are quarantined in the Schema Rejection Queue for admin review, while authentication or permission errors trigger controlled retries and alerting through Data 360 Monitoring.

- Guaranteed Event Continuity: Durable ReplayIDs ensure no event loss during connector downtime; missed events are rehydrated through replay windows or batch re-sync jobs.

- Data Integrity & Ordering: Built-in idempotency (RecordID + SequenceNumber) prevents duplicates, and ChangeEventHeader.sequenceNumber preserves correct event ordering for every CRM record.

Idempotent Design Considerations

- Event Uniqueness: Each CDC event carries a ReplayID and ChangeEventHeader.entityName for deterministic deduplication.

- Upsert Strategy: Implement UPSERT logic based on record ID to ensure repeated events don’t create duplicates.

- Replay Handling: Use the connector’s replay cursor to resume from the last committed offset in case of transient failures.

- Schema Evolution: Maintain a schema version in event metadata and update DLO mappings when CRM schema changes.

Security Considerations

Security is integral throughout the ingestion pipeline, from authentication to encryption and access control.

- Encryption and Trust : All events are transmitted using TLS 1.2+ and stored encrypted at rest in Data 360.

- Access Control: Only authenticated connectors with appropriate integration permissions can subscribe to CDC channels.

- Schema Validation: Every event payload is validated against the DLO schema before ingestion.

- Consent Propagation: Consent and data classification metadata flow downstream with each event to preserve privacy and compliance (GDPR, CCPA).

- Operational Governance: Events are logged, audited, and monitored for replay, schema rejection, and throughput anomalies via the Data 360 Trust Layer.

Sidebars

Timeliness

- CDC events are processed in near-real time—typically within seconds of the change in CRM.

- Latency may vary based on event volume and connector throughput, but Data 360 guarantees sub-minute availability for supported objects.

Data Volumes

- Each event payload is lightweight (~1–5 KB).

- For high-change-rate objects like Case or Contact, ensure throughput limits align with Salesforce CDC event allocations.

State Management

Each event includes metadata for state and version control:

- Replay IDs: Used for sequential event recovery.

- Schema Versioning: Maintain version metadata to manage contract changes.

- Idempotency Keys: Deduplicate replays across retries.

- Offset Commit: Data 360 persists commit state after each successful batch of events.

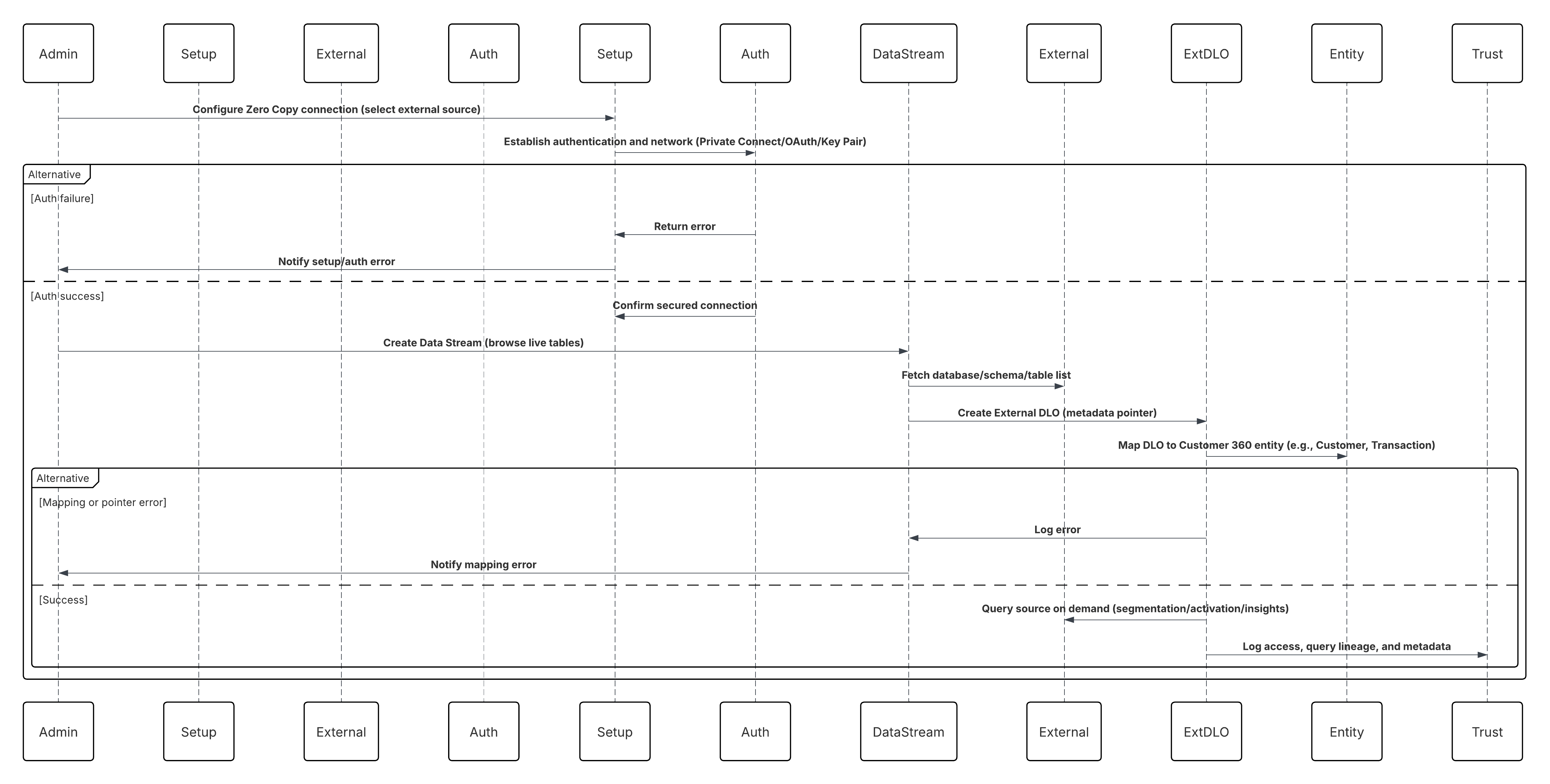

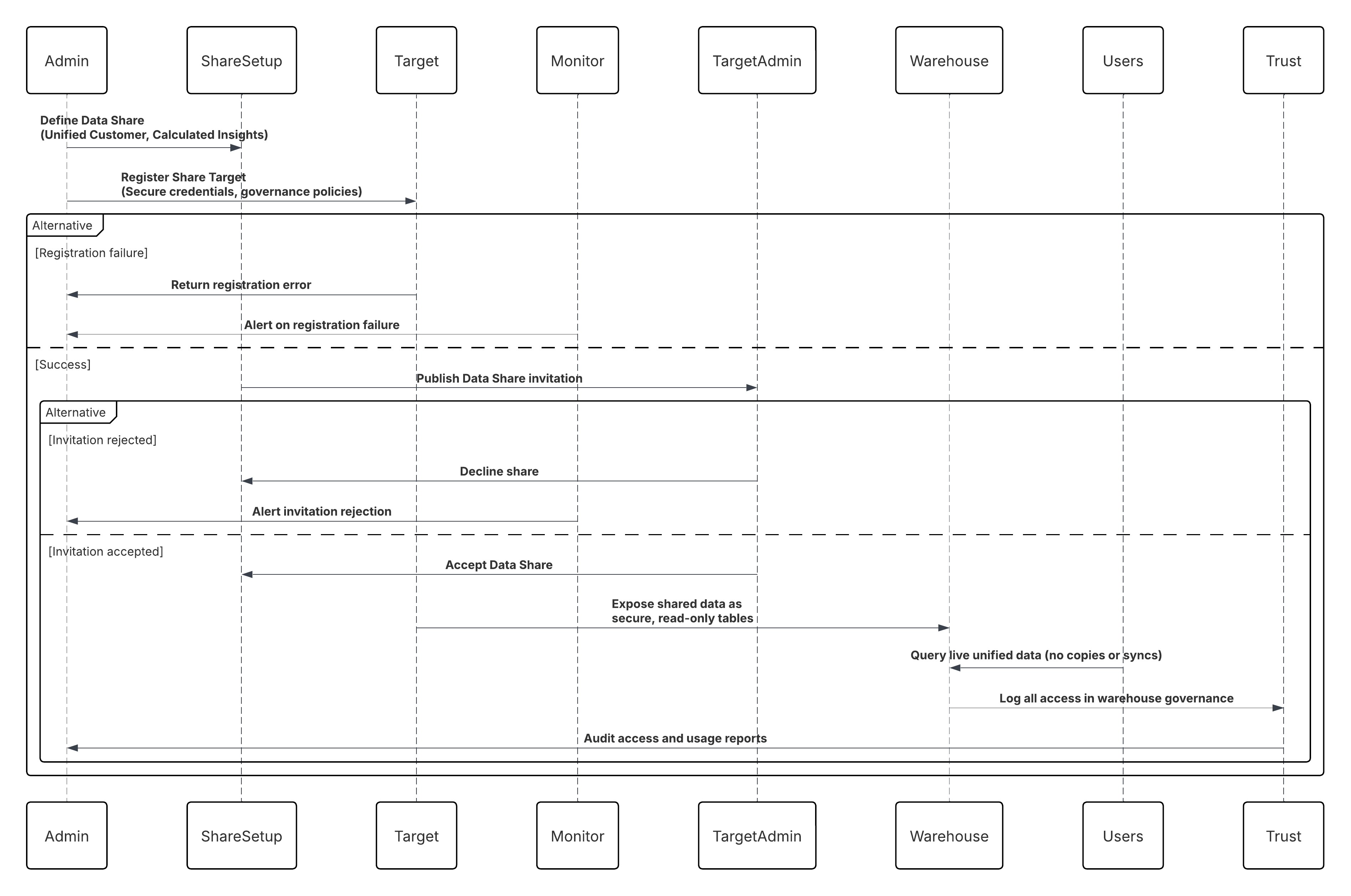

Complex Integration Scenarios