Pattern Overview

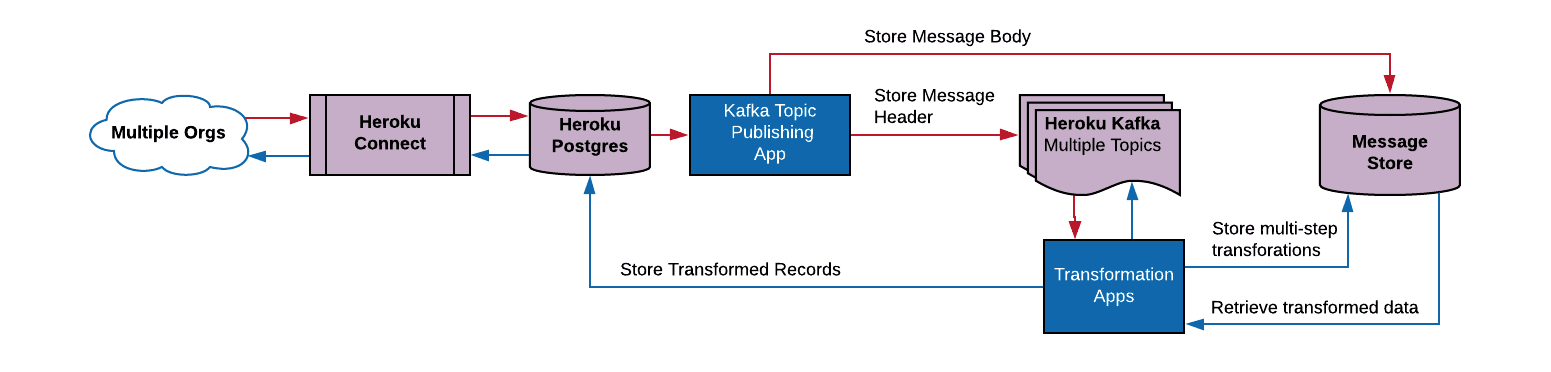

With the Claim Check message pattern, the complete representation of the transformed data is not passed through the message pipeline. Instead, the message body is stored independently, and only a message header that references the stored body is sent through Kafka. With each change to the data, a new representation of that data can be stored, and the final representation is written out to Postgres.

Cross-org integration with the Claim Check pattern.
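The flow described above can be sketched in a few lines. This is a minimal illustration, not a production implementation: the `message_store` dictionary and the `topic` list are hypothetical in-memory stand-ins for the external message store and the Kafka topic, and the header fields `claim_id` and `stage` are assumed names.

```python
import json
import uuid

# Hypothetical in-memory stand-ins: a real pipeline would use an external
# message store (for example S3 or Postgres) and a Kafka topic.
message_store = {}
topic = []


def publish_with_claim_check(payload, stage):
    """Store the full body, then send only a small header through the topic."""
    claim_id = str(uuid.uuid4())
    message_store[claim_id] = json.dumps(payload).encode("utf-8")
    header = {"claim_id": claim_id, "stage": stage}
    topic.append(json.dumps(header).encode("utf-8"))
    return claim_id


def consume_next():
    """Read the next header from the topic and redeem it for the stored body."""
    header = json.loads(topic.pop(0))
    body = json.loads(message_store[header["claim_id"]])
    return header, body


# Usage: the body is several kilobytes, but the message on the topic stays small.
record = {"account_id": "001", "notes": "x" * 5000}
claim_id = publish_with_claim_check(record, stage="extracted")
header_size = len(topic[0])
header, body = consume_next()
```

The consumer never sees the large body on the topic. It receives the header, then uses the claim ID to fetch the body from the store.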

Advantages to This Approach

There are two key advantages to this approach. First, it reduces the size of the messages delivered through Kafka. Kafka performance is optimized for messages of around 1 KB, and the default limit on message size in Kafka on Heroku is 1 MB. Smaller messages consume less of Kafka’s storage capacity, which can enable longer message retention if needed.

The second advantage is a more robust pipeline. If any part of the transformation pipeline fails, we don’t lose any of the data that was in transit. If we see data corruption, we can review the data as it was at each point in the pipeline and isolate the errors causing it.
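As a rough sketch of how stored representations support this kind of review, the example below keeps one snapshot per pipeline stage and finds the first stage whose output fails a check. The `versions` store, the function names, and the stage names are all hypothetical and in-memory, chosen only for illustration.

```python
import json
from collections import defaultdict

# Hypothetical in-memory store keyed by claim ID; each pipeline stage
# appends a new representation rather than overwriting the previous one.
versions = defaultdict(list)


def save_representation(claim_id, stage, data):
    """Append a snapshot of the data as it looked after the given stage."""
    versions[claim_id].append((stage, json.dumps(data, sort_keys=True)))


def history(claim_id):
    """Return every stored (stage, data) pair for a message, oldest first."""
    return [(stage, json.loads(raw)) for stage, raw in versions[claim_id]]


def first_stage_where(claim_id, is_bad):
    """Return the first stage whose output fails a check, or None."""
    for stage, data in history(claim_id):
        if is_bad(data):
            return stage
    return None


# Usage: the amount is lost during the (assumed) "enriched" stage.
save_representation("abc", "extracted", {"amount": "100.50"})
save_representation("abc", "normalized", {"amount": 100.5})
save_representation("abc", "enriched", {"amount": None, "currency": "USD"})
culprit = first_stage_where("abc", lambda d: d["amount"] is None)
```

Because earlier snapshots are retained, the data from the stage before the failure is still available for reprocessing.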

How to Implement the Message Store

The message store could be implemented in a few ways, but typically it would be an S3 bucket or even a secondary Postgres database. The critical need is a store that can handle the necessary throughput and hold a potentially large number of messages. Also, since this message store will have a complete representation of the data, it’s important to ensure that the store aligns with security policies, including encryption and access controls.
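One way to keep the choice of backing store open is to have the pipeline depend only on a small interface. The sketch below is an assumption, not a prescribed design: `MessageStore`, `DirectoryStore`, and `round_trip` are hypothetical names, the local directory merely stands in for S3 or Postgres, and the content-derived key is one possible keying scheme. Encryption and access controls are not shown; they would be configured on the real store.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Protocol


class MessageStore(Protocol):
    """Hypothetical minimal interface any backing store would satisfy."""

    def put(self, body: bytes) -> str: ...

    def get(self, key: str) -> bytes: ...


class DirectoryStore:
    """Local-directory stand-in for a store such as S3 or Postgres."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def put(self, body: bytes) -> str:
        # Content-derived key: storing the same body twice yields the same key.
        key = hashlib.sha256(body).hexdigest()
        (self.root / key).write_bytes(body)
        return key

    def get(self, key: str) -> bytes:
        return (self.root / key).read_bytes()


def round_trip(store: MessageStore, body: bytes) -> bytes:
    """Write a body and read it back through the interface only."""
    return store.get(store.put(body))


# Usage: pipeline code sees only put() and get().
store = DirectoryStore(Path(tempfile.mkdtemp()))
restored = round_trip(store, b'{"account_id": "001"}')
```

Swapping in a different backend would then mean writing another class with the same two methods, leaving the pipeline code unchanged.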

To extend this pattern for more complex data transformation, especially for high volume scenarios, see the Data Transformation at Scale pattern.

About the Contributors

Steve Stearns is a Regional Success Architect Director at Salesforce on the Scale and Availability team. Scale and Availability architects apply the learnings from our large customer engagements to help influence product scale evolution and innovation.